Origins of Mind : 03

A-tasks

Children fail

because they rely on a model of minds and actions that does not incorporate beliefs

non-A-tasks

Children pass

by relying on a model of minds and actions that does incorporate beliefs

the dogma of mindreading

a clue ...

‘chimpanzees understand … intentions … perception and knowledge’

‘chimpanzees probably do not understand others in terms of a fully human-like belief–desire psychology’

Call & Tomasello (2008, 191)

‘the core theoretical problem in ... animal mindreading is that ... the conception of mindreading that dominates the field ... is too underspecified to allow effective communication among researchers’

Heyes (2015, 321)

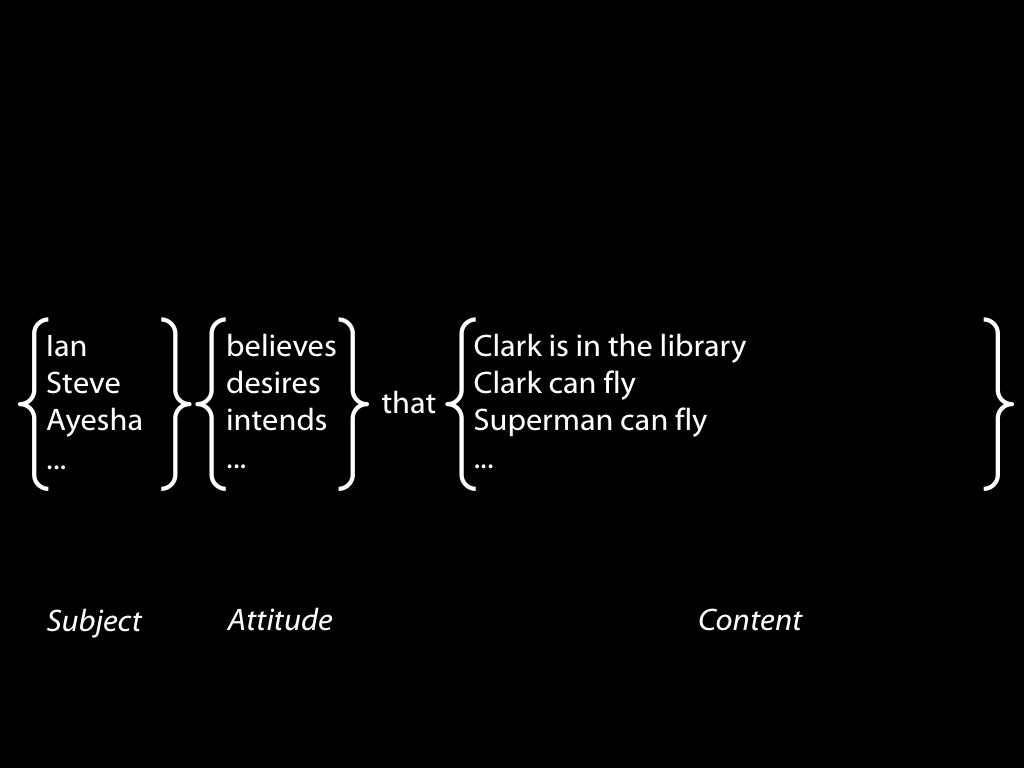

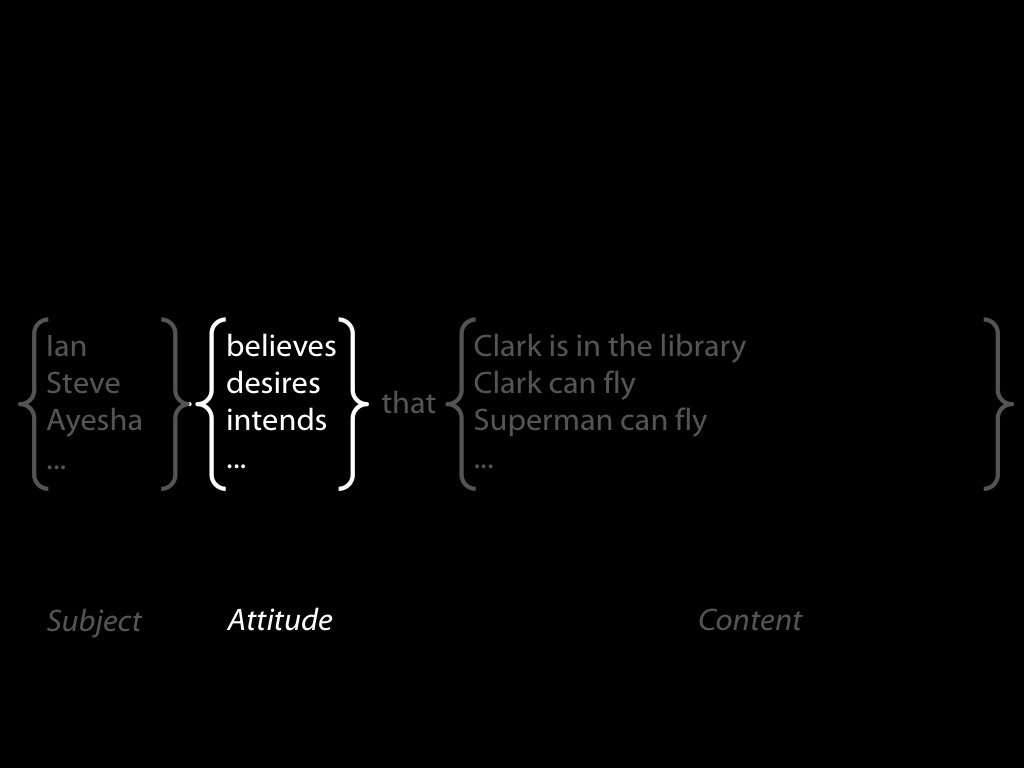

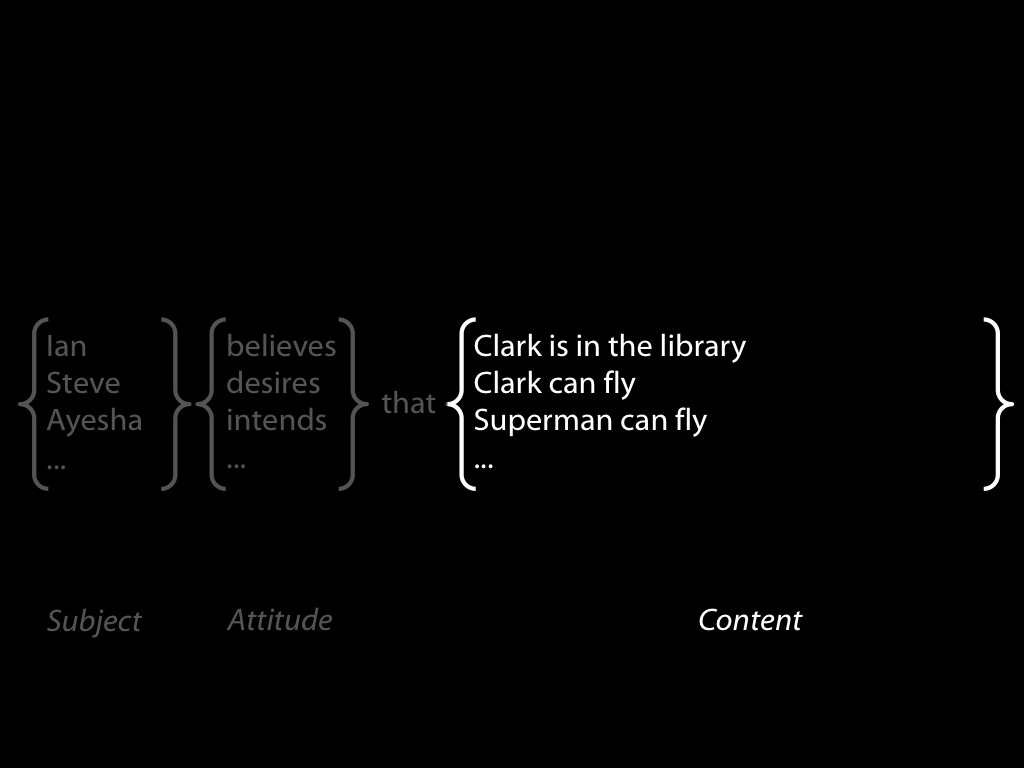

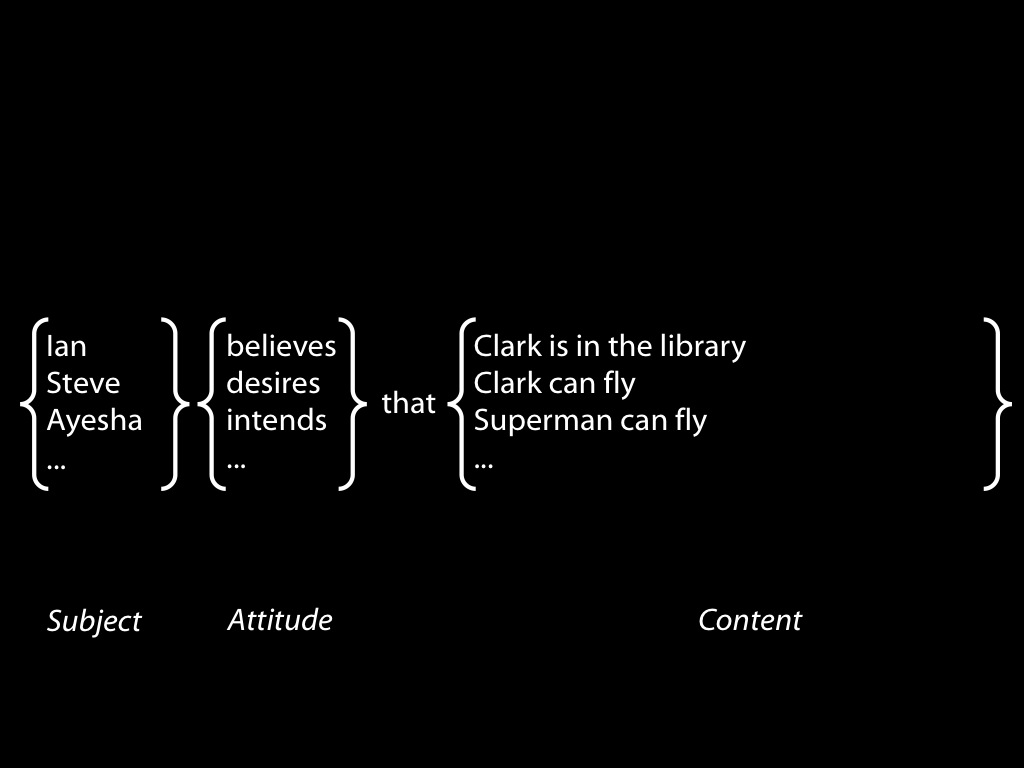

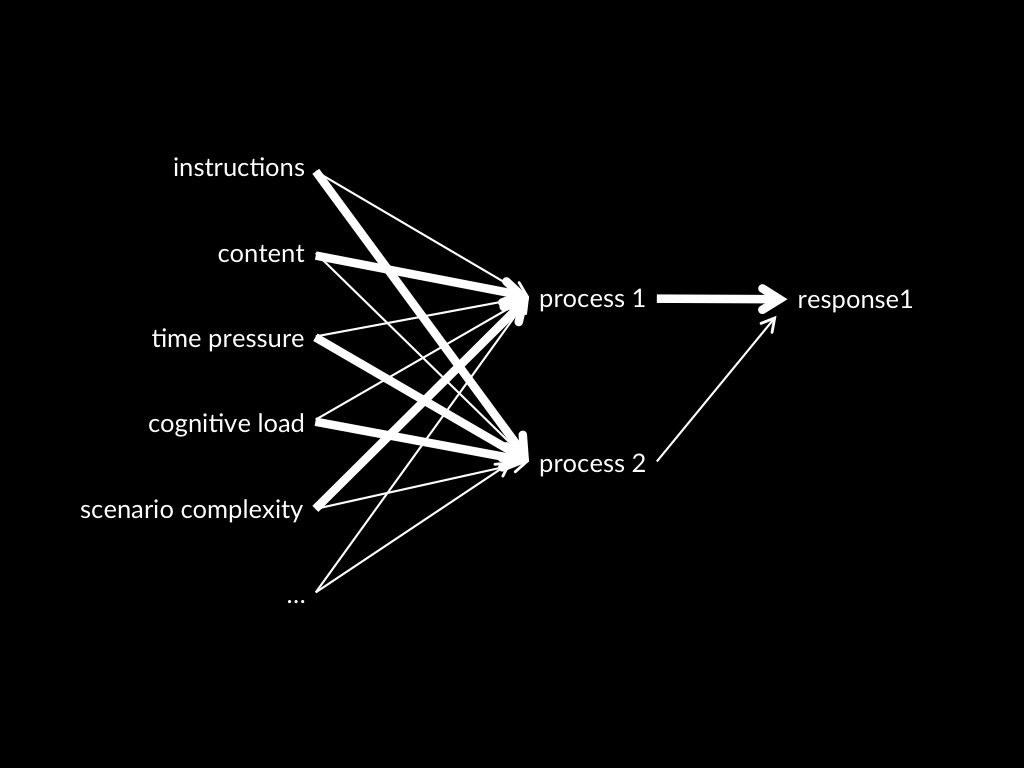

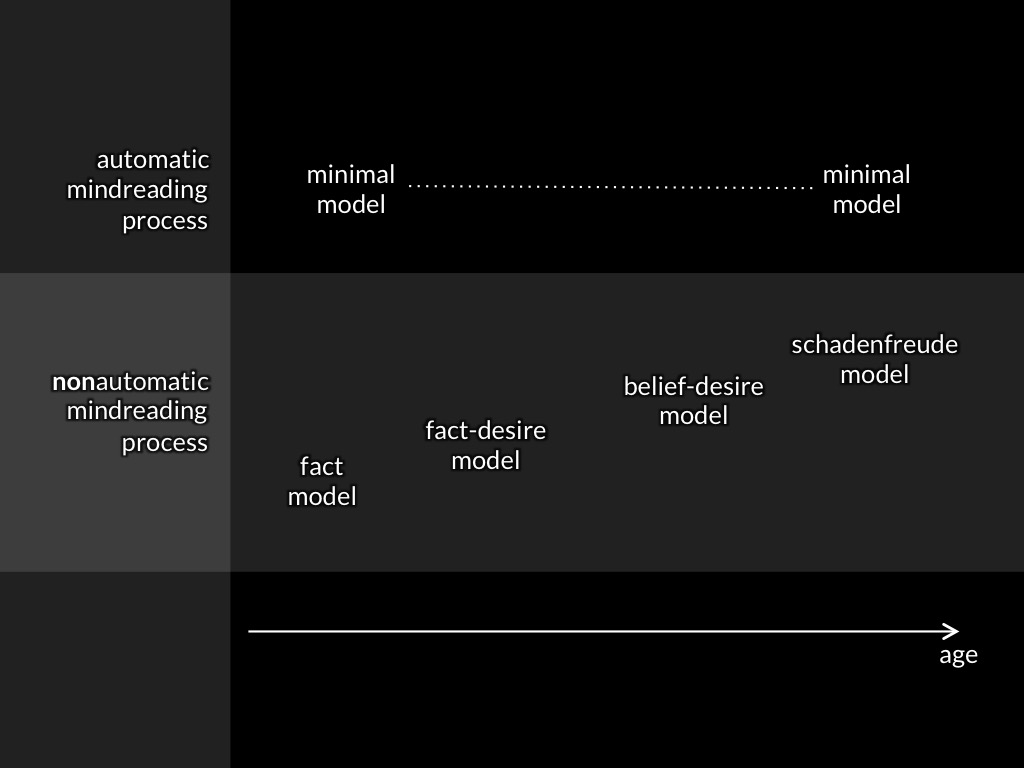

1. models

2. processes

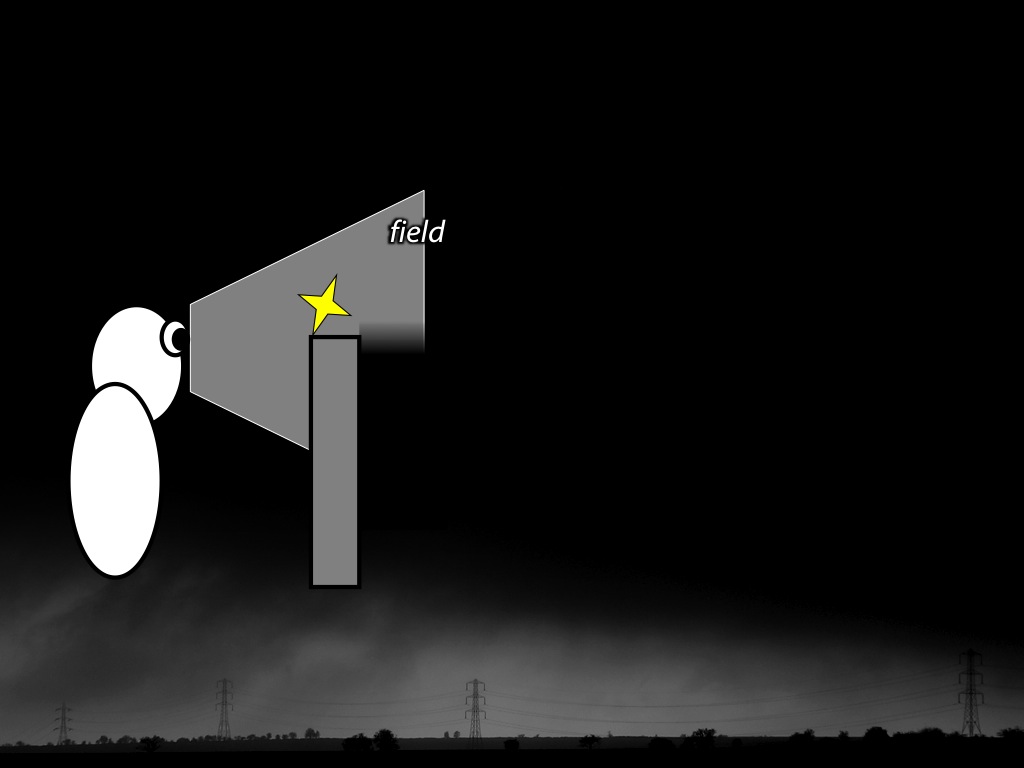

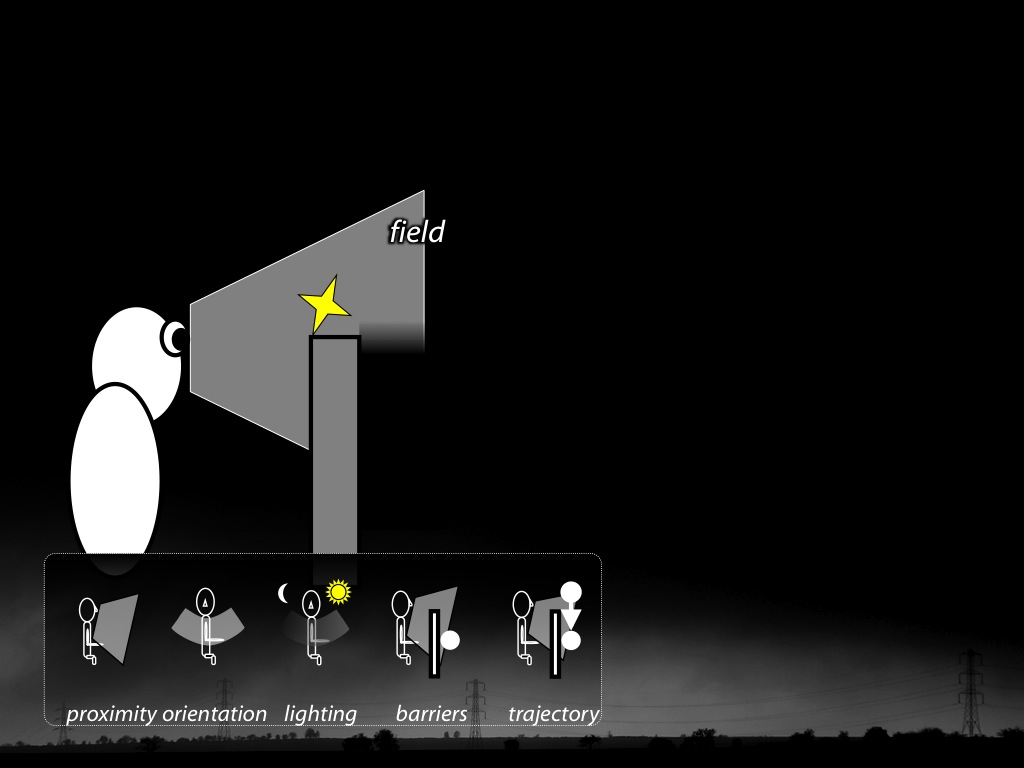

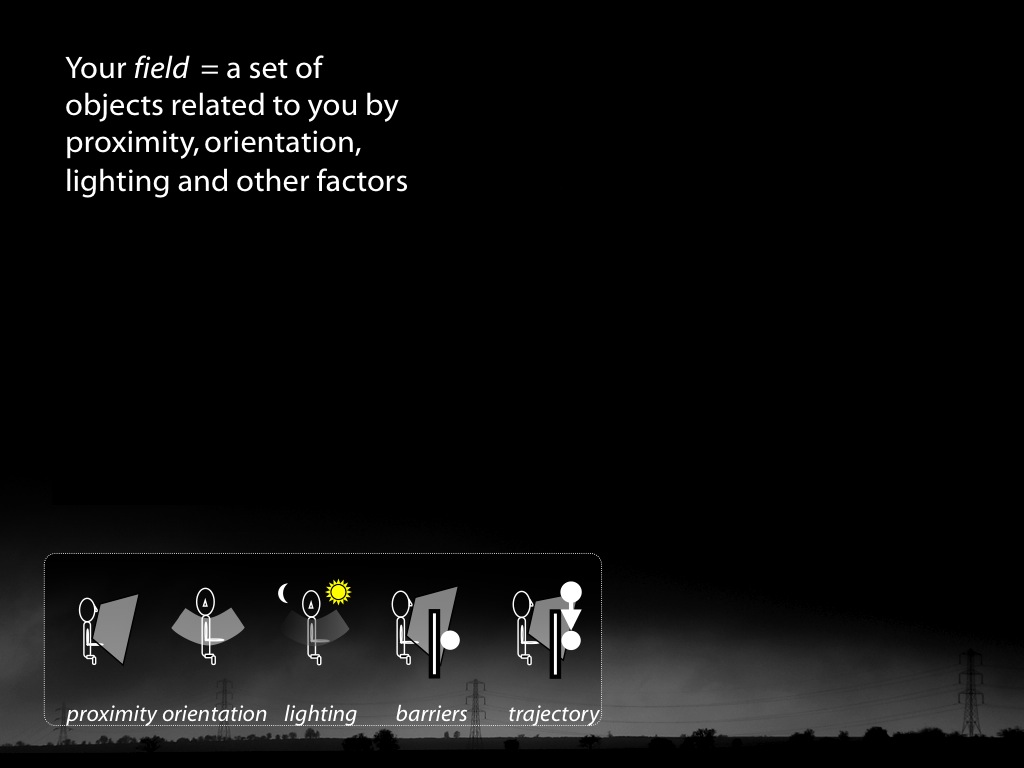

Minimal Theory of Mind

What models of minds and actions underpin which mental state tracking processes?

Fact:

Minimal theory of mind specifies a model of minds and actions, one which could in principle characterise how infants (or nonhuman apes, corvids or other animals) track mental states.

Conjecture:

Nonhuman mindreading processes are characterised by a minimal model of minds and actions.

Evidence?

Q1

How do observations about tracking support conclusions about representing models?

Q2

Why are there dissociations in nonhuman apes’, human infants’ and human adults’ performance on belief-tracking tasks?

Signature Limits

signature limits generate predictions

Hypothesis:

Infants’ belief-tracking abilities rely on minimal models of the mental.

Prediction:

Infants’ belief-tracking is subject to the signature limits of minimal models.

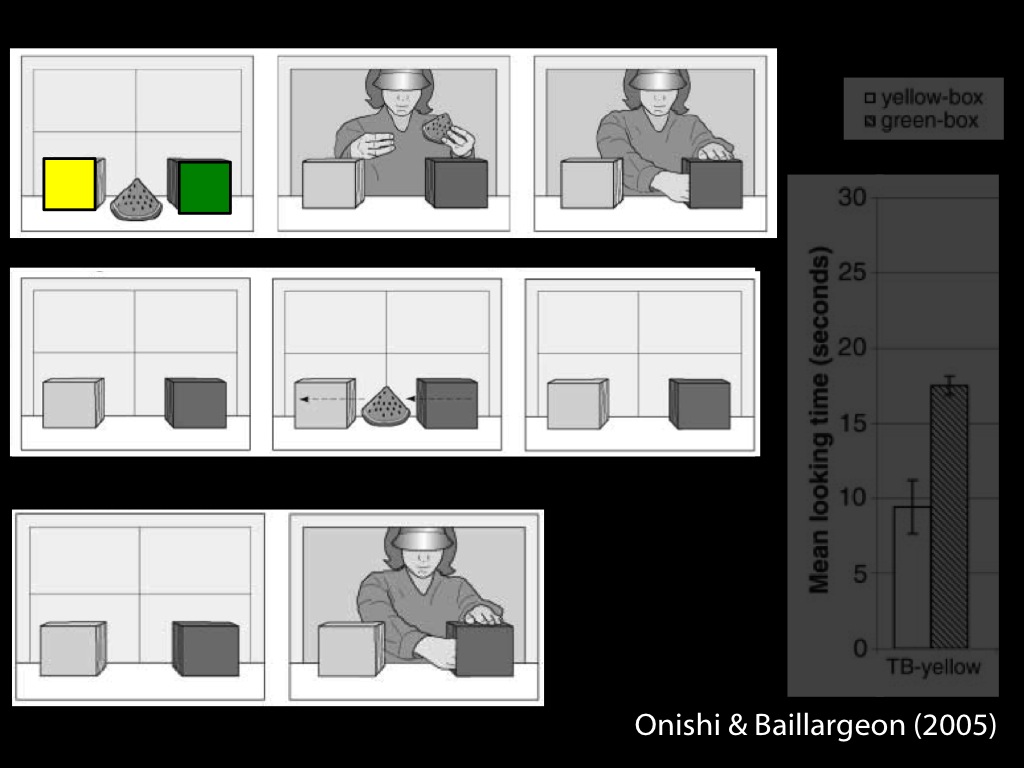

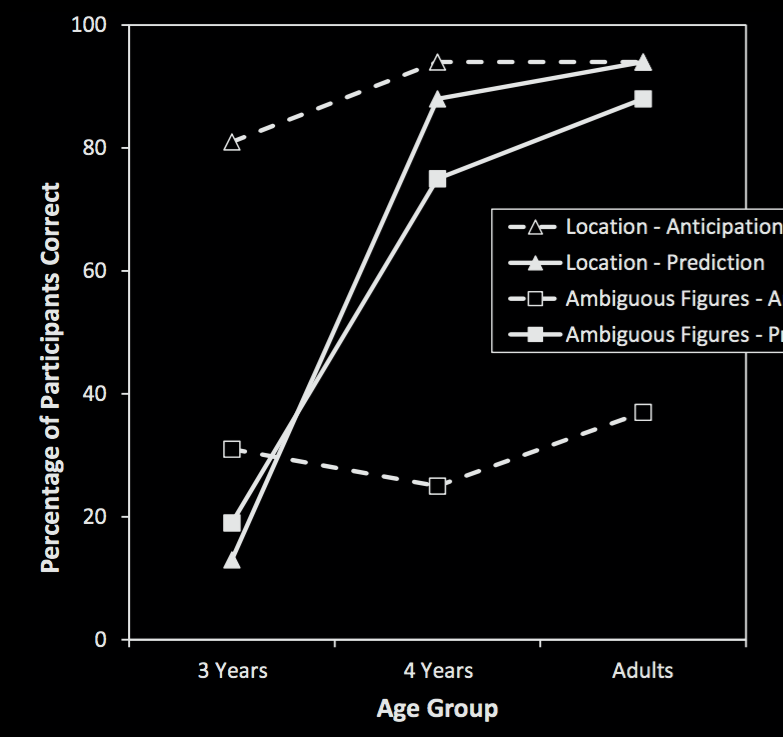

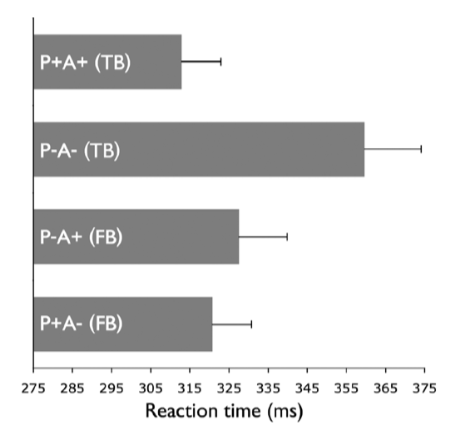

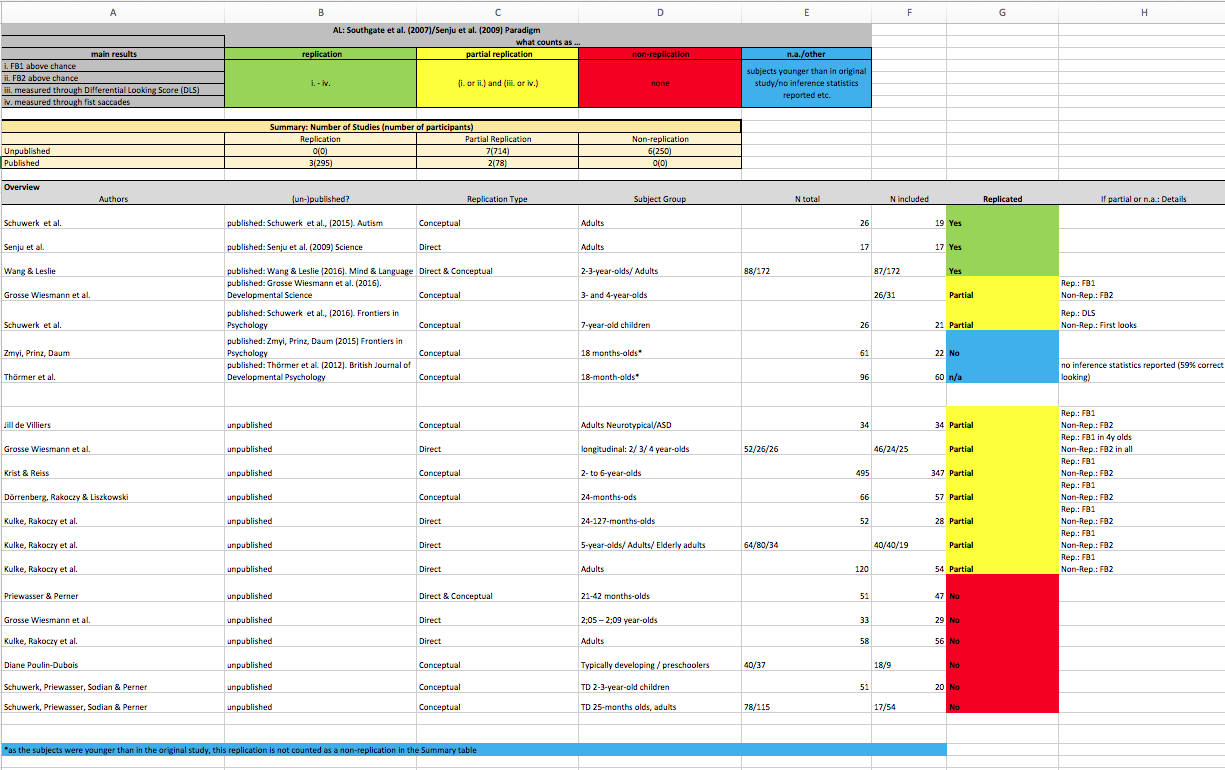

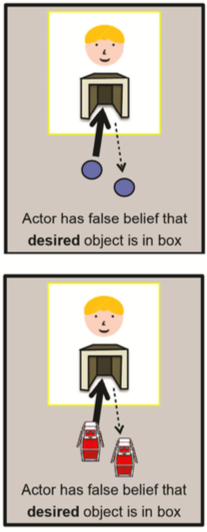

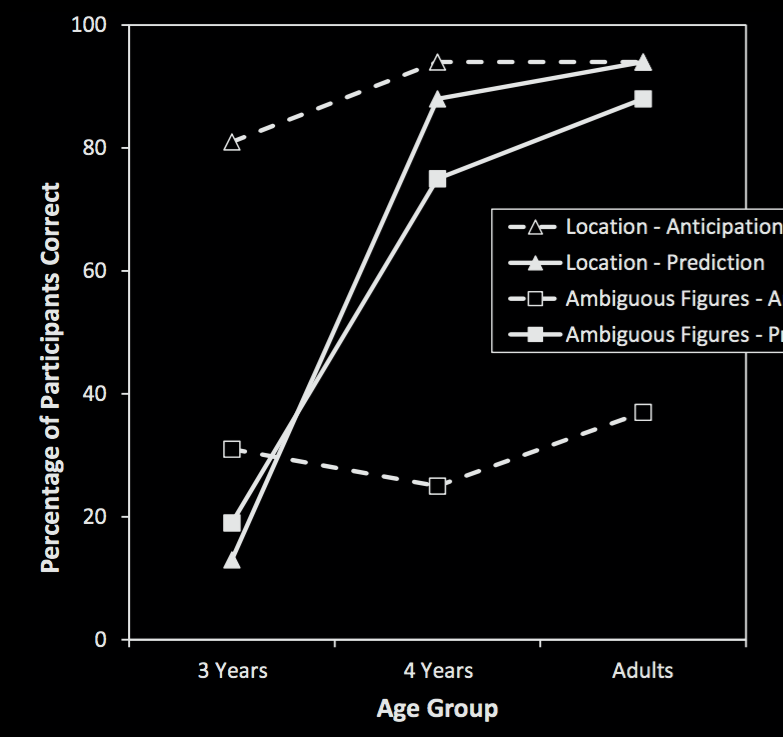

Low et al, 2014 figure 2

signature limits generate predictions

Hypothesis:

Infants’ belief-tracking abilities rely on minimal models of the mental.

Prediction:

Infants’ belief-tracking is subject to the signature limits of minimal models.

reidentifying systems:

same signature limit -> same process

Scott et al (2015, figure 2b)

‘the theoretical arguments offered [...] are [...] unconvincing, and [...] the data can be explained in other terms’

Carruthers (2015)

signature limits generate predictions

Hypothesis:

Infants’ belief-tracking abilities rely on minimal models of the mental.

Prediction:

Infants’ belief-tracking is subject to the signature limits of minimal models.

Q1

How do observations about tracking support conclusions about representing models?

Q2

Why are there dissociations in nonhuman apes’, human infants’ and human adults’ performance on belief-tracking tasks?

1. models [done]

2. processes

Automatic Mindreading in Adults

belief-tracking is sometimes but not always automatic

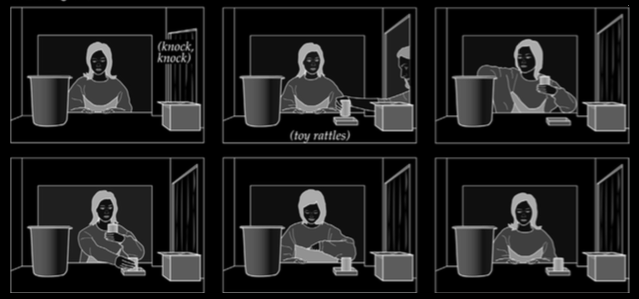

Kovacs et al, 2010

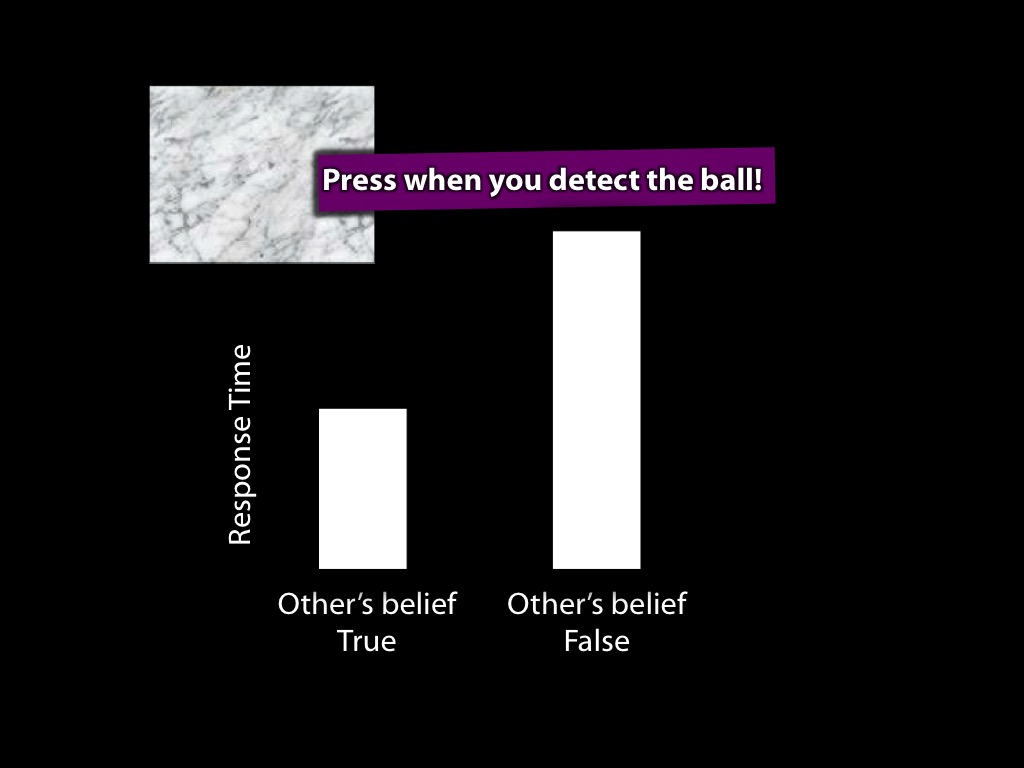

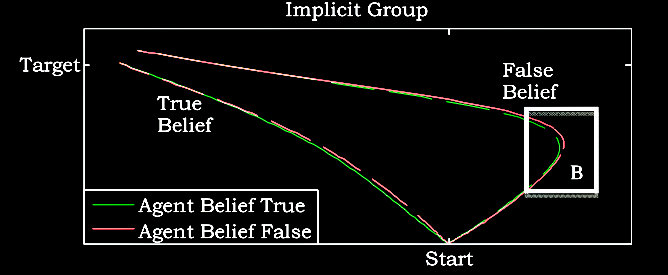

Kovacs et al, 2010 figure 1A

belief-tracking is sometimes but not always automatic

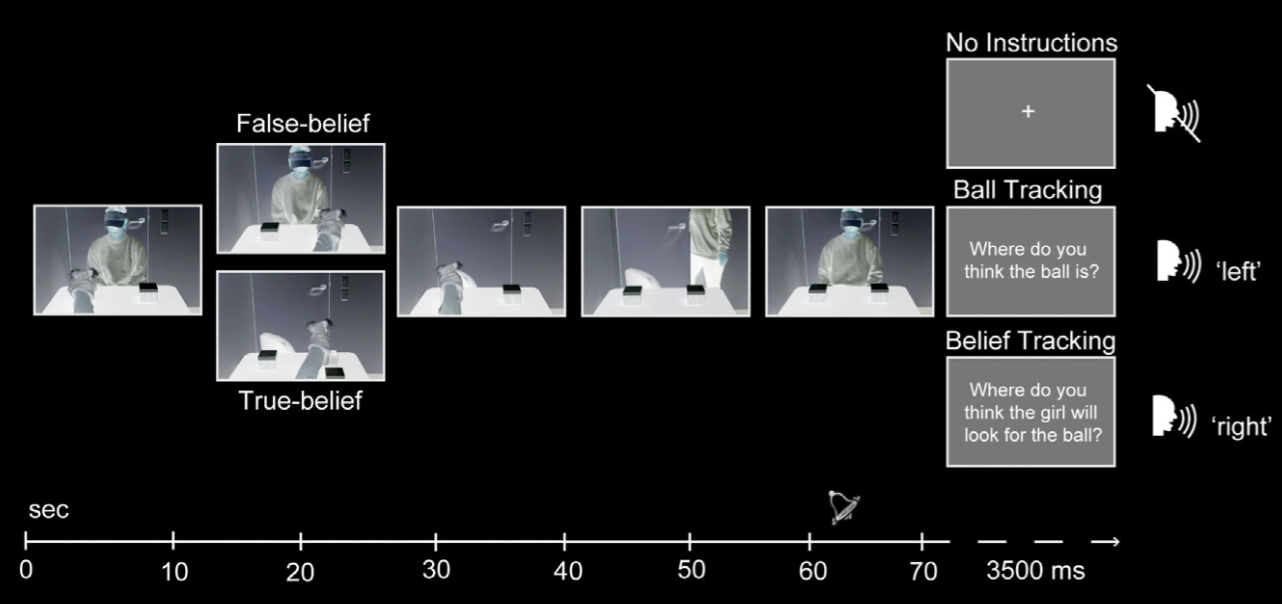

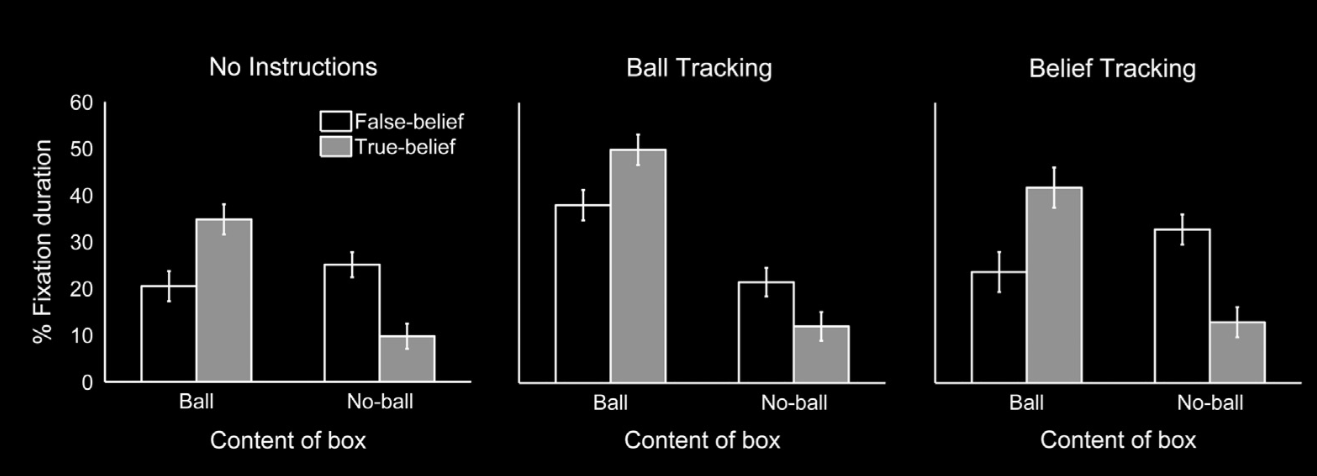

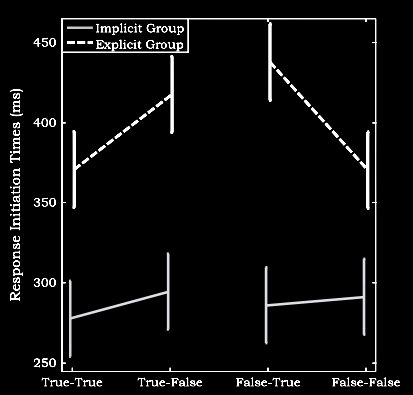

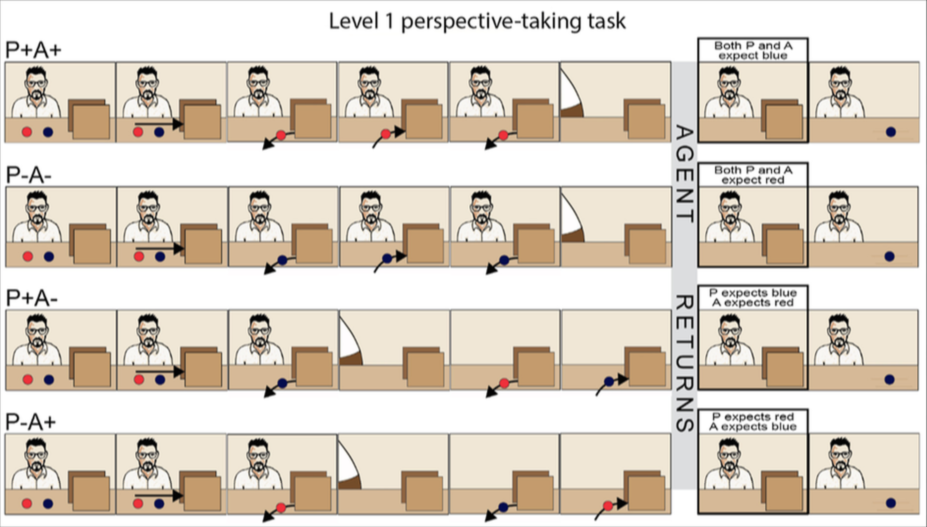

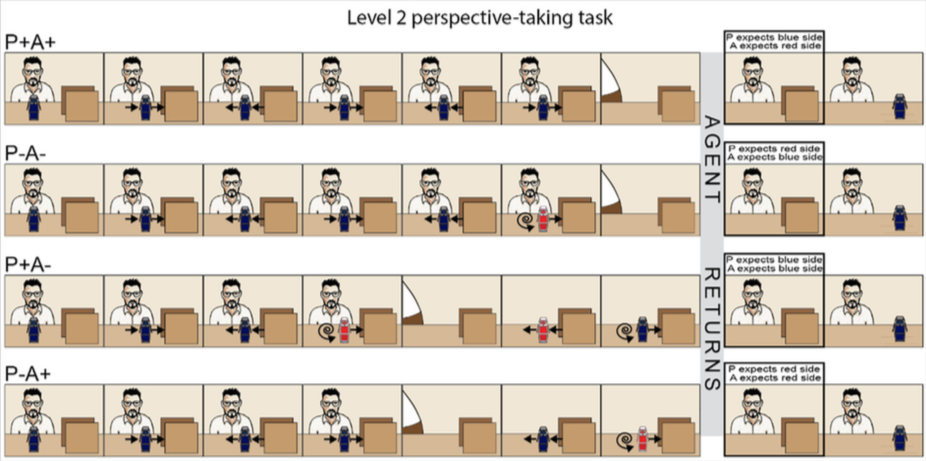

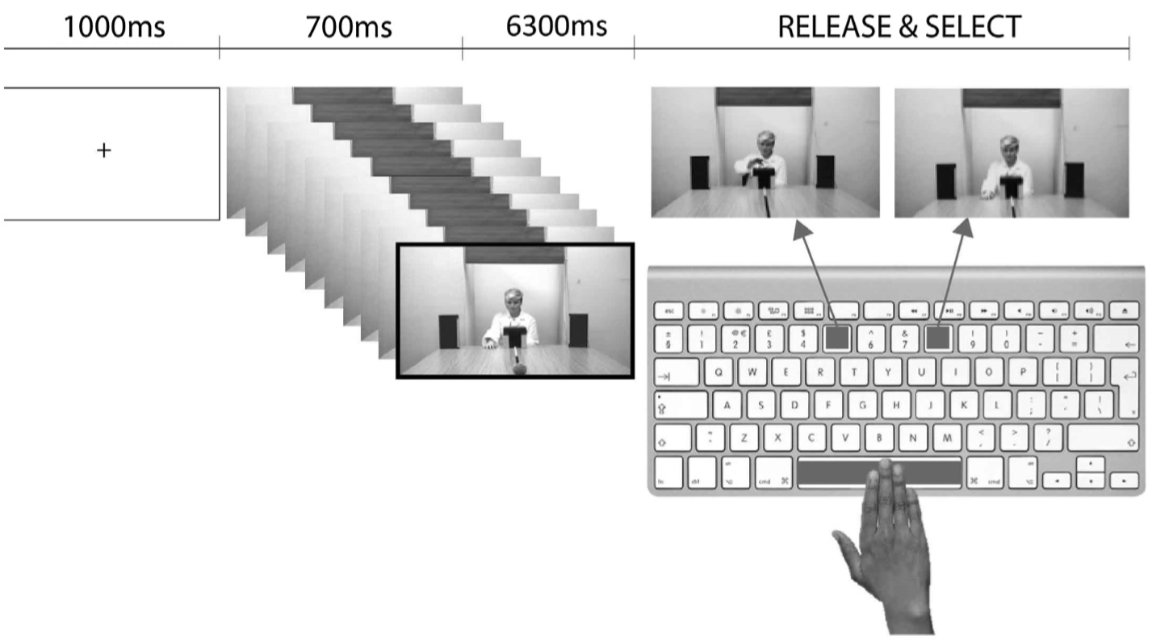

Schneider et al (2014, figure 1)

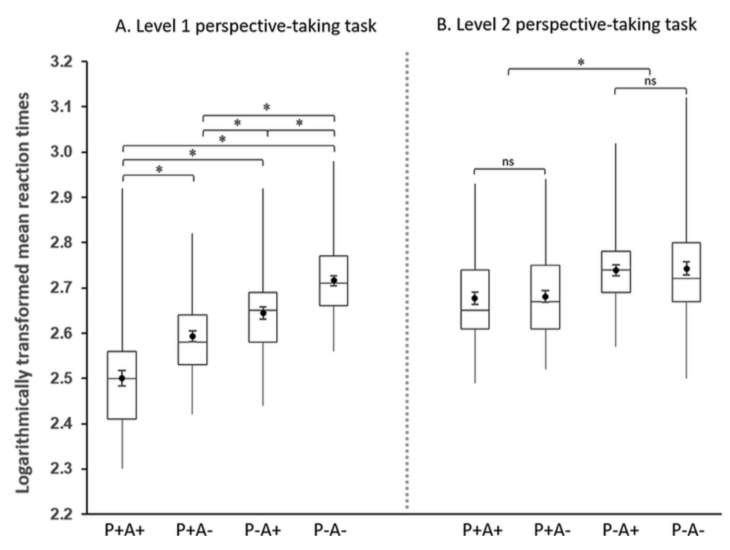

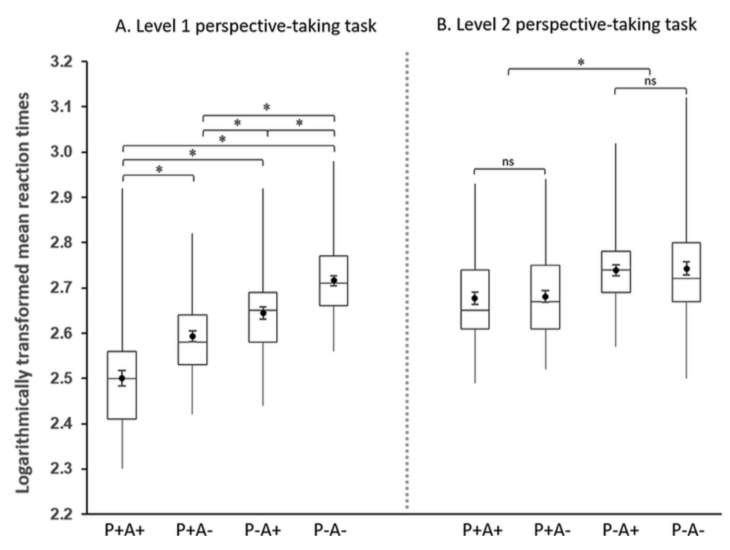

Schneider et al (2014, figure 3)

belief-tracking is sometimes but not always automatic

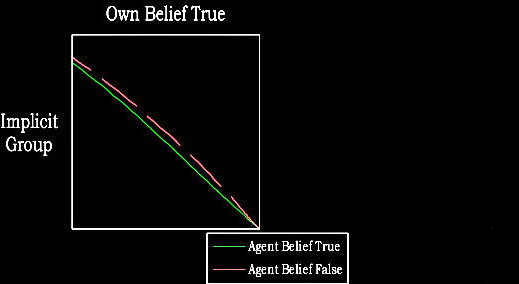

van der Wel et al (2014, figure 1)

van der Wel et al (2014, figure 2)

belief-tracking is sometimes but not always automatic

aside: altercentric interference vs proactive gaze vs movement trajectories

van der Wel et al (2014, figure 3)

‘they slowed down their responses when there was a belief conflict versus when there was not’

belief-tracking is sometimes but not always automatic

Back & Apperly (2010, fig 1, part)

belief-tracking is sometimes but not always automatic

-- can consume attention and working memory

-- can require inhibition

1. models [done]

2. processes

Q1

How do observations about tracking support conclusions about representing models?

Q2

Why are there dissociations in nonhuman apes’, human infants’ and human adults’ performance on belief-tracking tasks?

reidentifying systems:

same signature limit -> same process

signature limits generate predictions

Hypothesis:

Some automatic belief-tracking systems rely on minimal models of the mental.

Hypothesis:

Infants’ belief-tracking abilities rely on minimal models of the mental.

Prediction:

Automatic belief-tracking is subject to the signature limits of minimal models.

Prediction:

Infants’ belief-tracking is subject to the signature limits of minimal models.

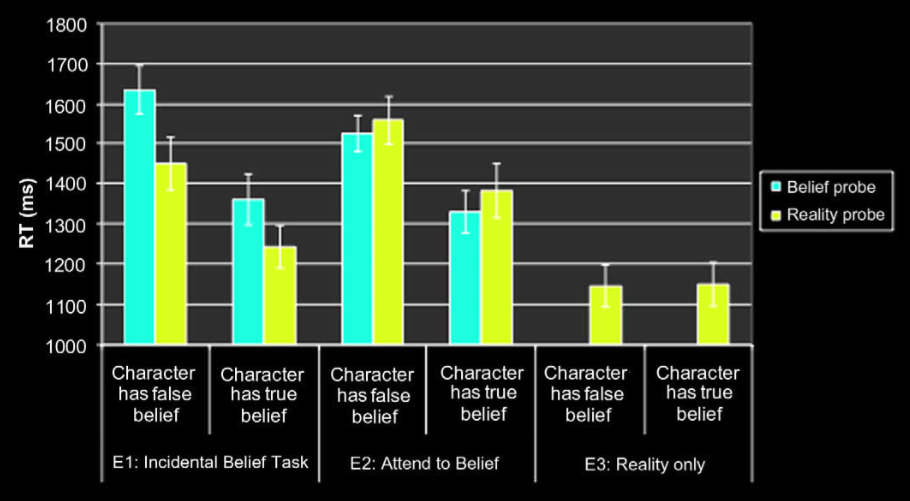

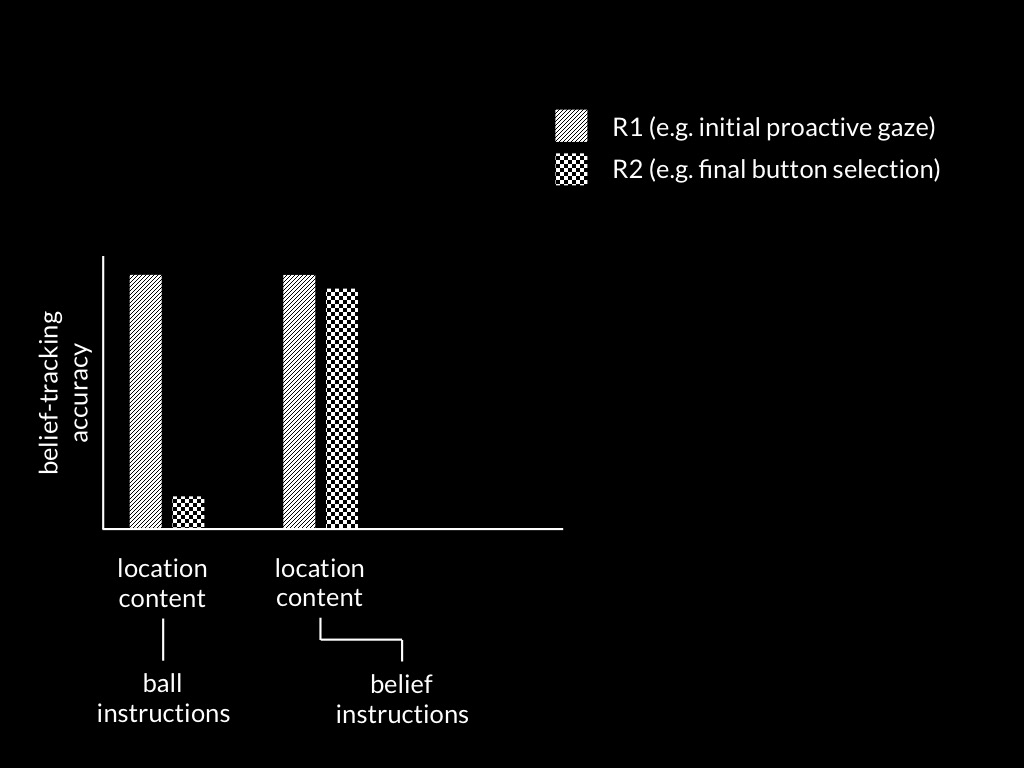

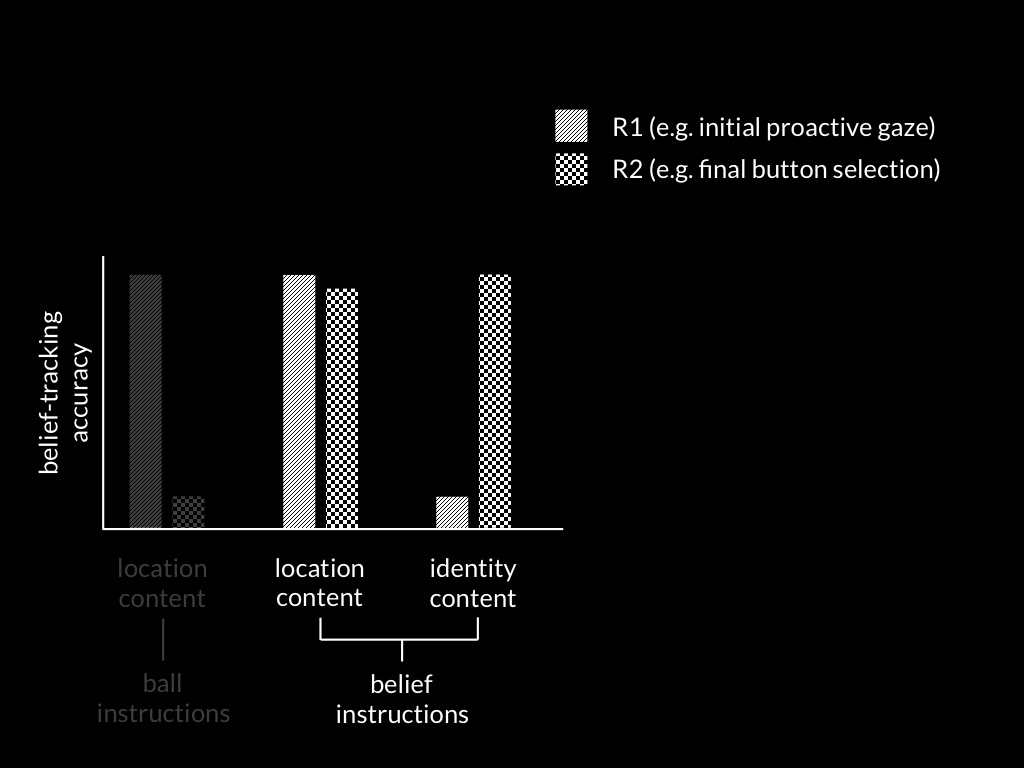

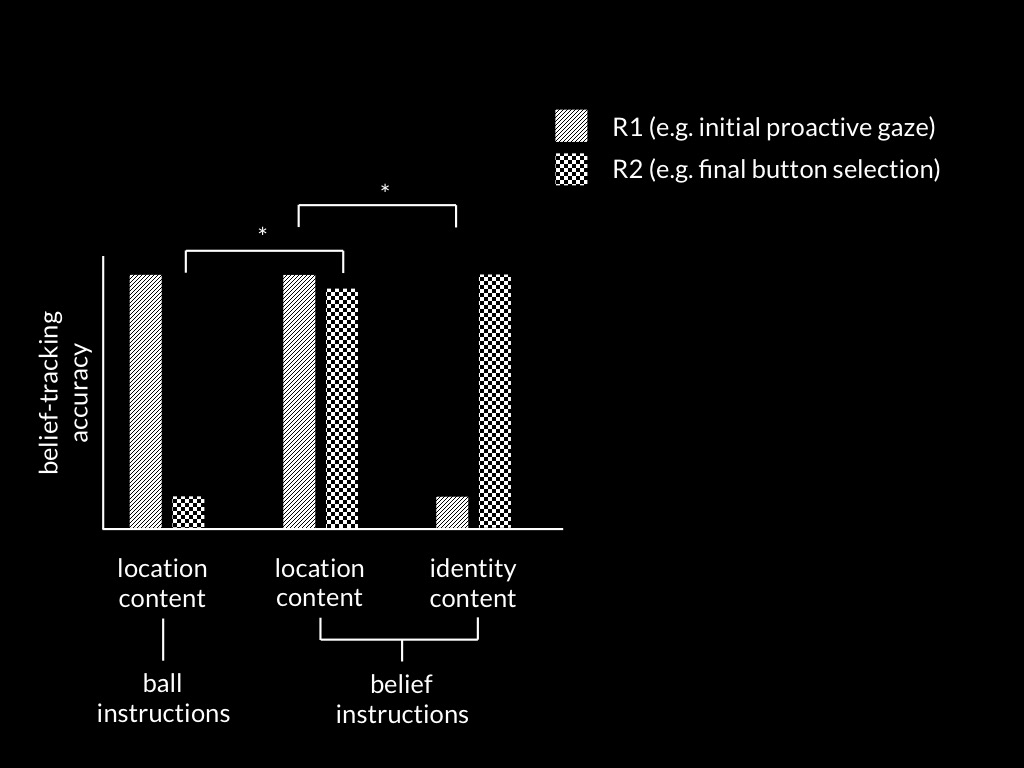

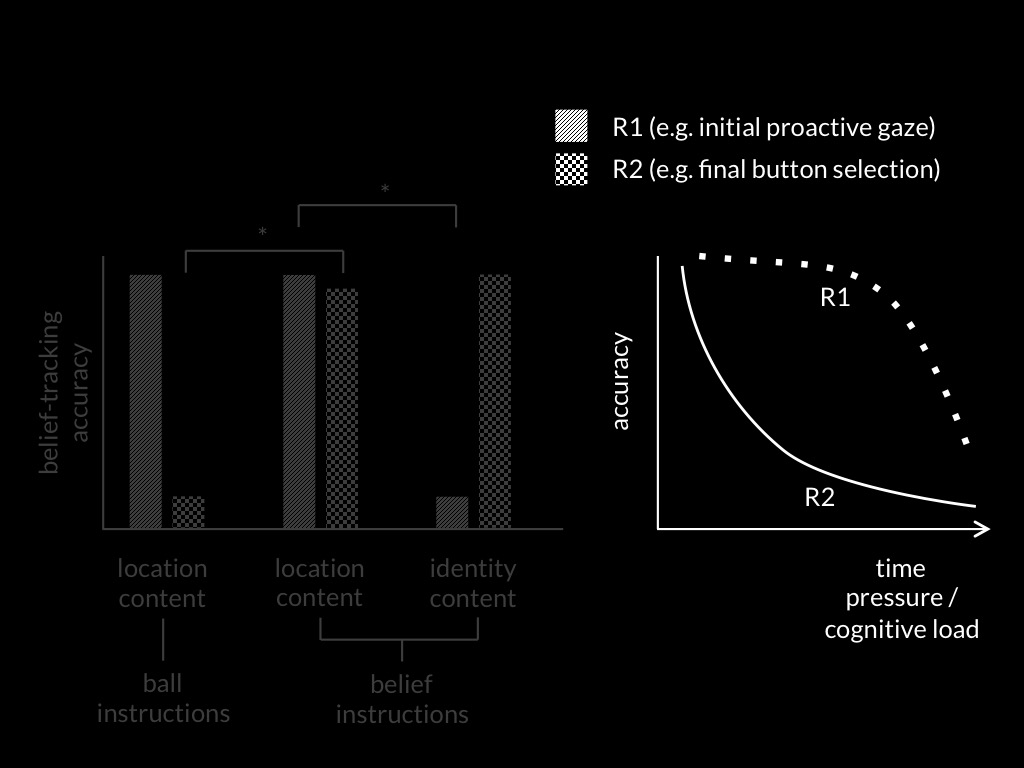

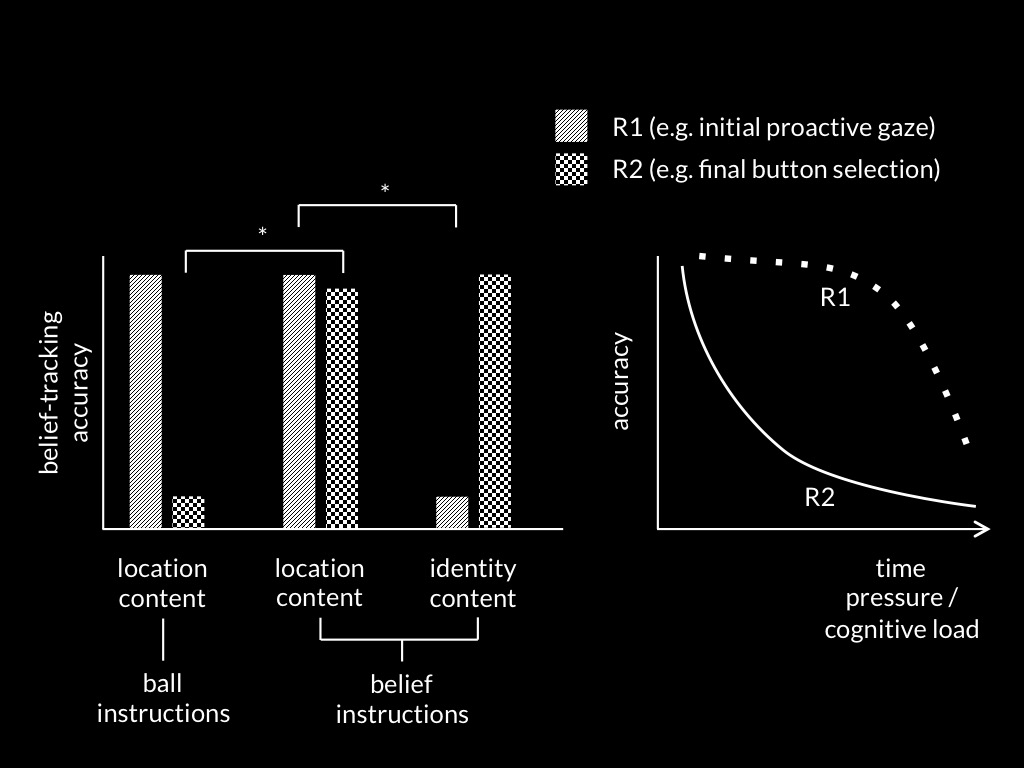

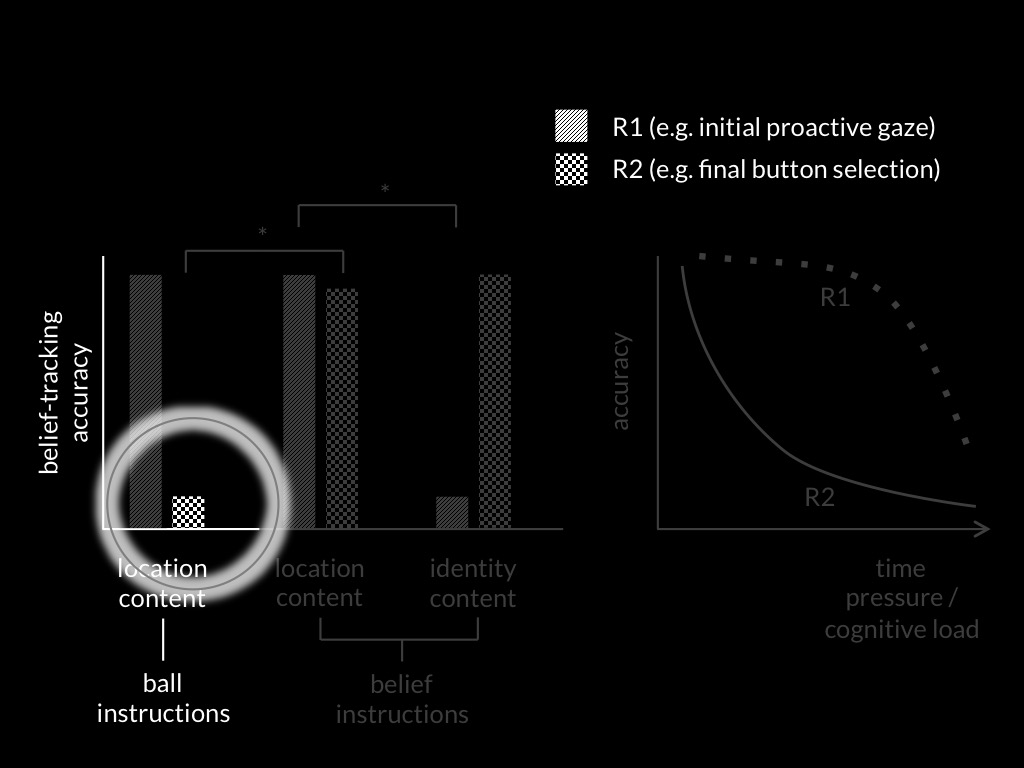

Edwards and Low, 2019 figure 1

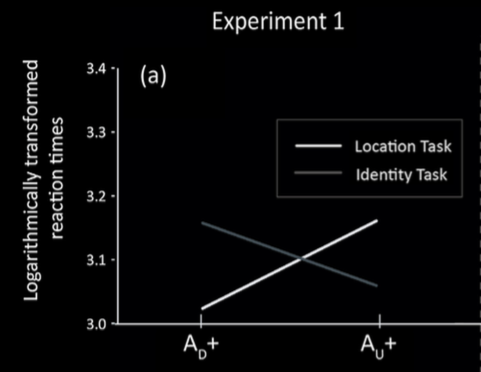

Edwards and Low, 2019 figure 4 (part)

signature limits generate predictions

Hypothesis:

Some automatic belief-tracking systems rely on minimal models of the mental.

Hypothesis:

Infants’ belief-tracking abilities rely on minimal models of the mental.

Prediction:

Automatic belief-tracking is subject to the signature limits of minimal models.

Prediction:

Infants’ belief-tracking is subject to the signature limits of minimal models.

reidentifying systems:

same signature limit -> same process

Q1

How do observations about tracking support conclusions about representing models?

Q2

Why are there dissociations in nonhuman apes’, human infants’ and human adults’ performance on belief-tracking tasks?

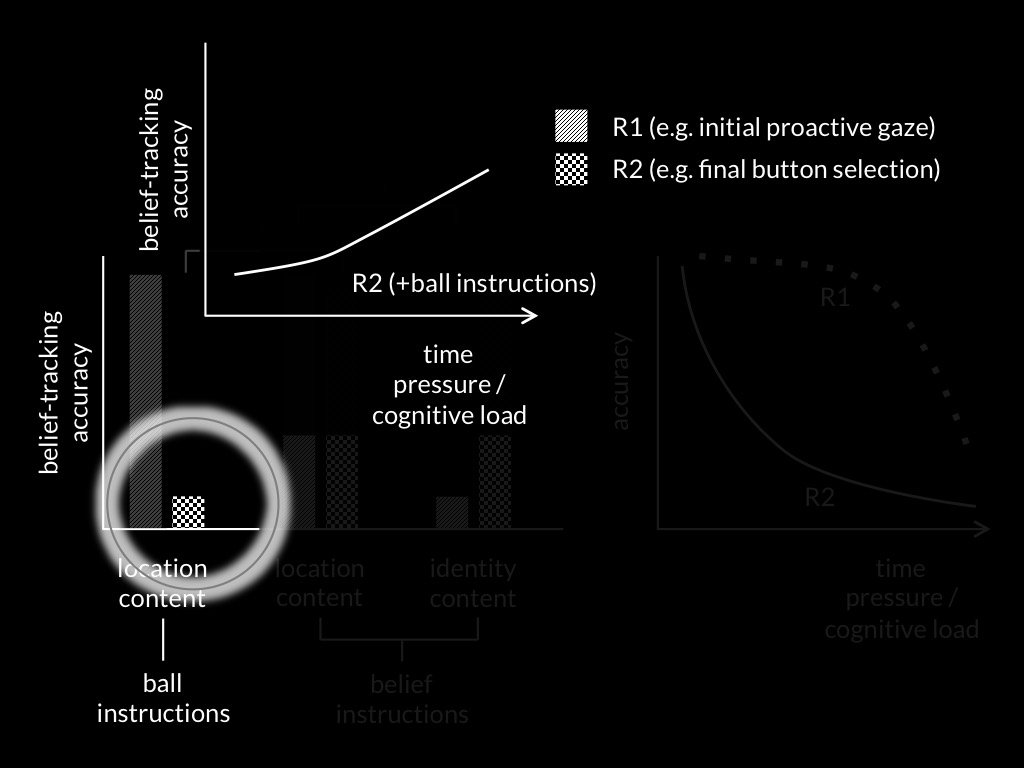

Edwards and Low, 2017 figure 7a

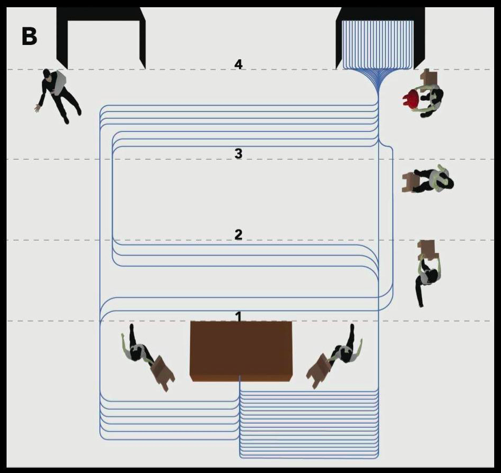

Maymon et al (pilot), figure 4B, used with permission

reidentifying systems:

same signature limit -> same process

An infant mindreading process occurs as an automatic mindreading process in adults.

signature limits generate predictions

Hypothesis:

Some automatic belief-tracking systems rely on minimal models of the mental.

Hypothesis:

Infants’ belief-tracking abilities rely on minimal models of the mental.

Prediction:

Automatic belief-tracking is subject to the signature limits of minimal models.

Prediction:

Infants’ belief-tracking is subject to the signature limits of minimal models.

Q1

How do observations about tracking support conclusions about representing models?

Q2

Why are there dissociations in nonhuman apes’, human infants’ and human adults’ performance on belief-tracking tasks?

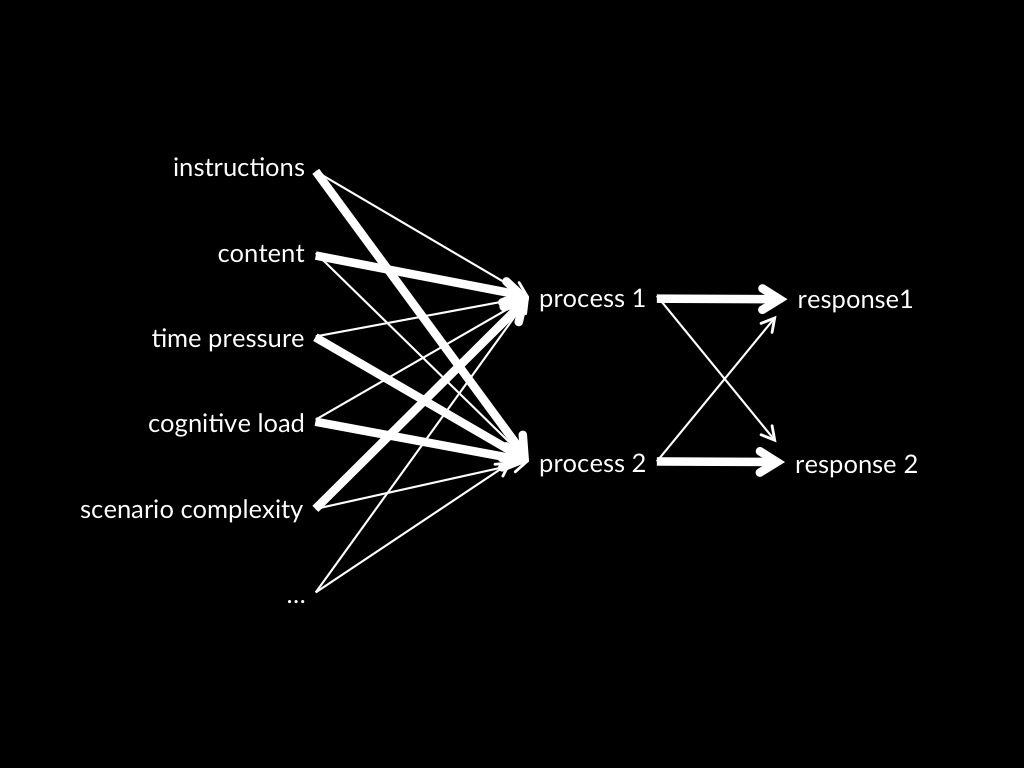

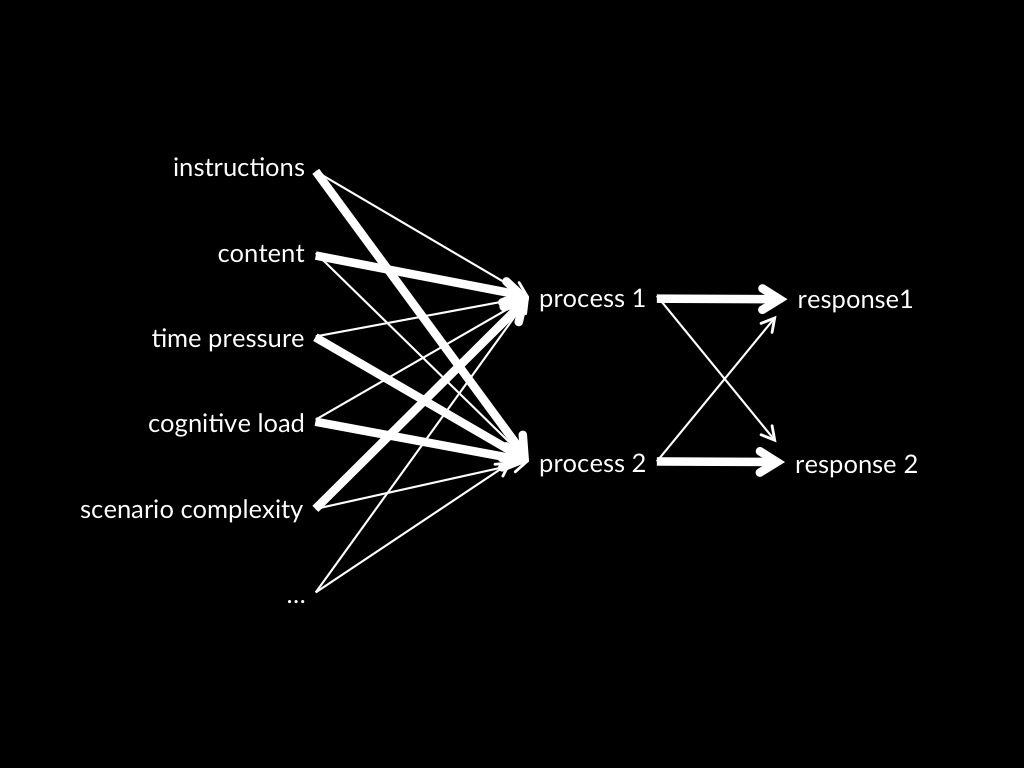

A Dual Process Theory of Mindreading

A-tasks

Children fail

because they rely on a model of minds and actions that does not incorporate beliefs

non-A-tasks

Children pass

by relying on a model of minds and actions that does incorporate beliefs

the dogma of mindreading

Q1

How do observations about tracking support conclusions about representing models?

Q2

Why are there dissociations in nonhuman apes’, human infants’ and human adults’ performance on belief-tracking tasks?

1. models [done]

2. processes

implicit / modular / ‘system-1’ / ...

innate

informationally encapsulated

domain specific

subject to limited accessibility

speedy

tacit

subpersonal

unconscious

...

‘it seems doubtful that the often long lists of correlated attributes should come as a package’

Adolphs (2010, p. 759)

‘we wonder whether the dichotomous characteristics … are … perfectly correlated’

Keren and Schul (2009, p. 537)

a fresh start

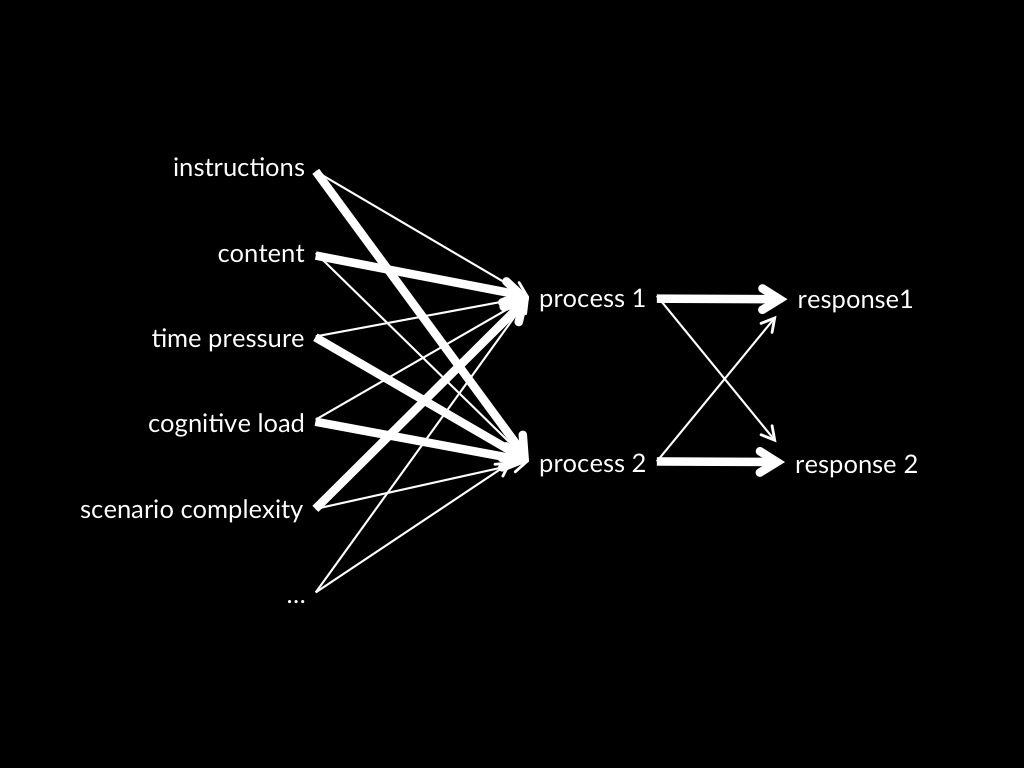

Process 1 -> Response 1

Process 2 -> Response 2

Dual Process Theory of Mindreading (core part)

Two (or more) mindreading processes are distinct:

the conditions which influence whether they occur,

and which outputs they generate,

do not completely overlap.

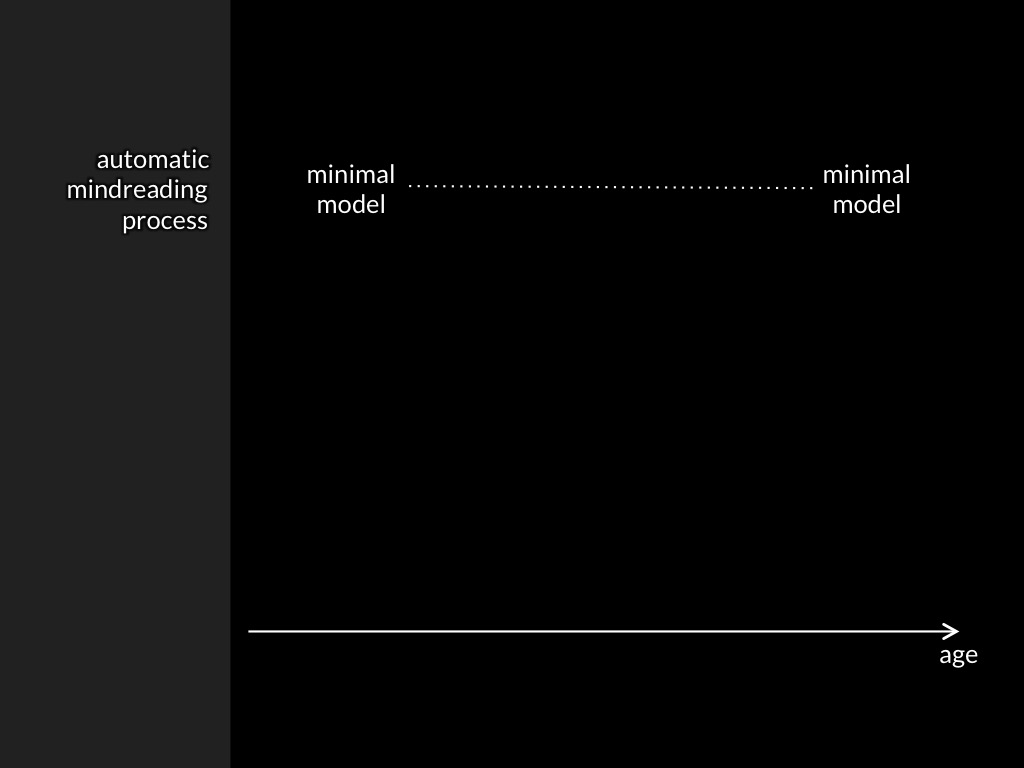

What about development?

Low et al, 2014 figure 2

Q1

How do observations about tracking support conclusions about representing models?

Q2

Why are there dissociations in nonhuman apes’, human infants’ and human adults’ performance on belief-tracking tasks?

A-tasks

Children fail

because they rely on a model of minds and actions that does not incorporate beliefs

non-A-tasks

Children pass

by relying on a model of minds and actions that does incorporate beliefs

the dogma of mindreading

Mindreading: Conclusions

A-tasks

Children fail

because they rely on a model of minds and actions that does not incorporate beliefs

non-A-tasks

Children pass

by relying on a model of minds and actions that does incorporate beliefs

the dogma of mindreading

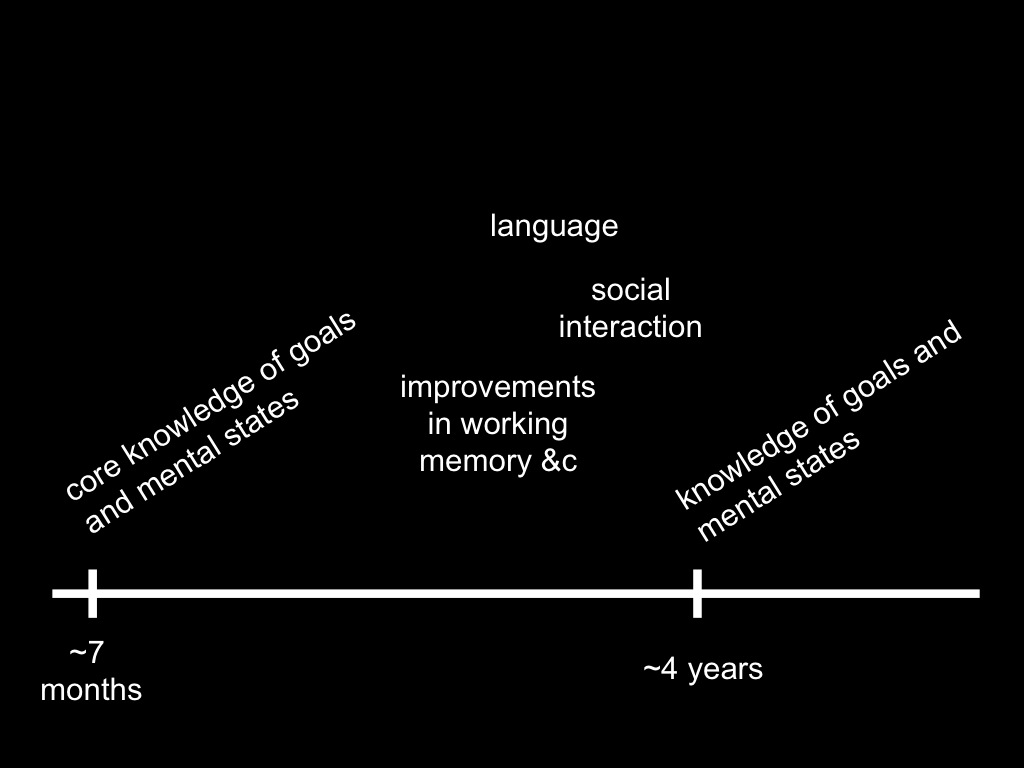

Conjecture

Infants have core knowledge of minds and actions.

Core knowledge is sufficient for success on non-A-tasks.

Infants lack knowledge of minds and actions.

Knowledge is necessary for success on A-tasks.

core knowledge of minds = the representations underpinning automatic belief-tracking, which rely on a minimal model of the mental.

evidence

signature limits

A-tasks

Children fail

because they rely on a model of minds and actions that does not incorporate beliefs

non-A-tasks

Children pass

by relying on a model of minds and actions that does incorporate beliefs

the dogma of mindreading