On the

Developmental Origins of

Knowledge of Physical Objects

‘... ’tis past doubt,

that Men have in their Minds several Ideas ...:

It is in the first place to be enquired,

How he comes by them?’

(Locke, 1689 p. 104)

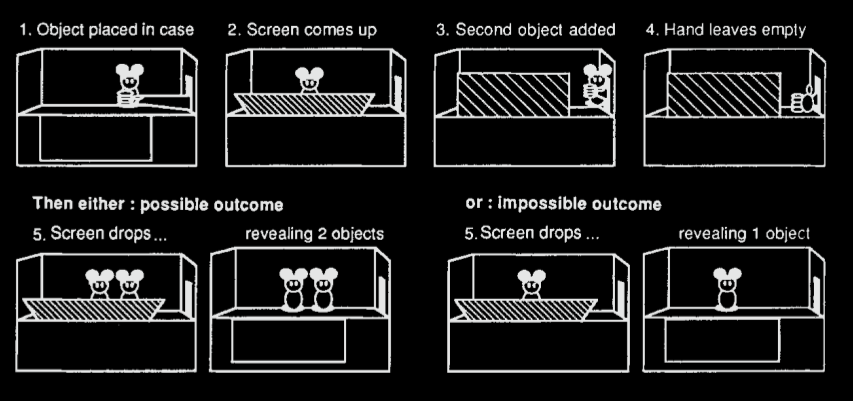

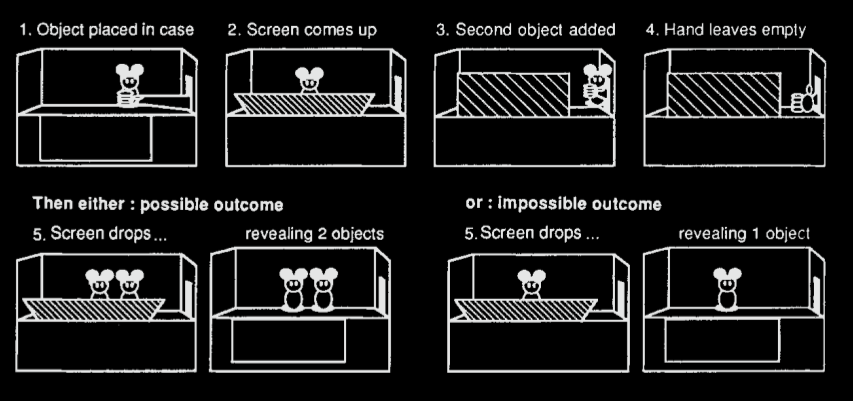

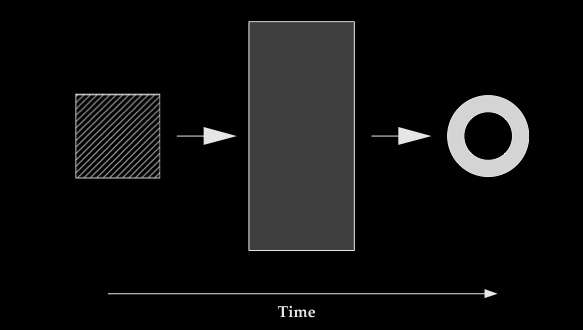

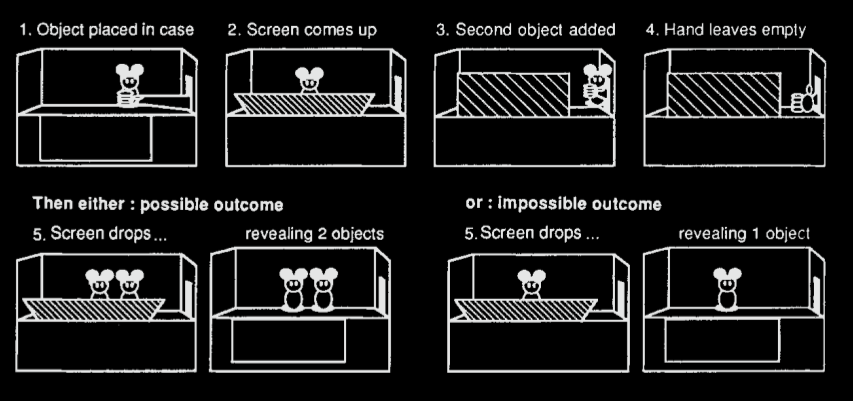

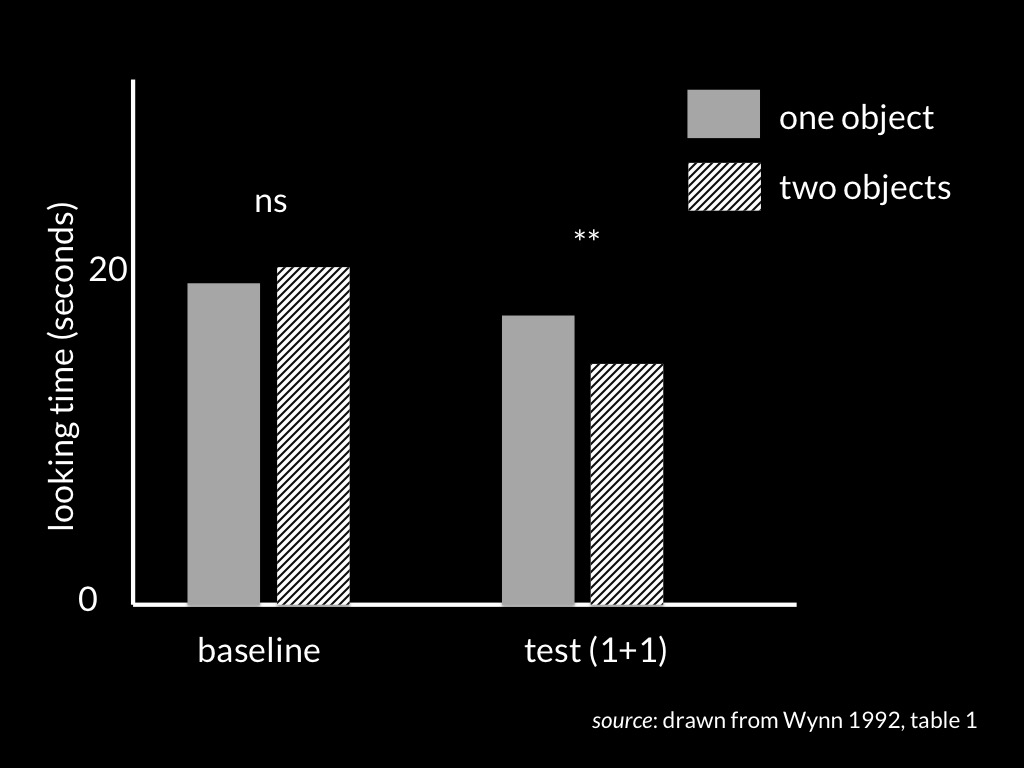

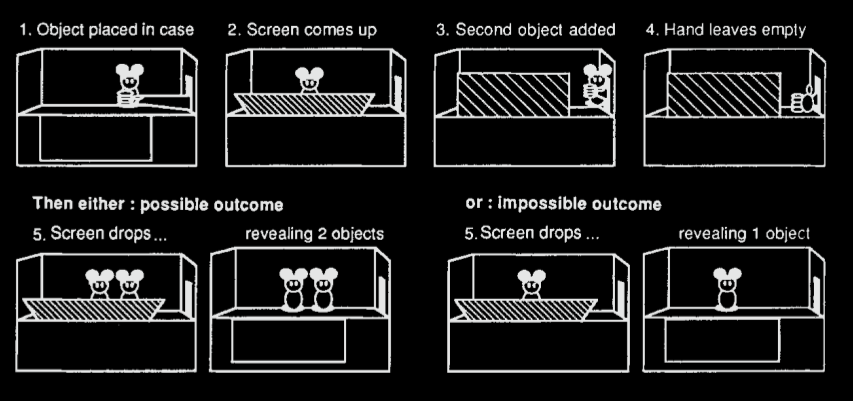

4- and 5-month-olds can track briefly occluded objects.

Wynn 1992, fig 1 (part)

4- to 6-month-olds can track briefly occluded objects

| scenario | method | source |
| --- | --- | --- |
| 1 vs 2 objects | habituation | Spelke et al 1995 |
| one unperceived object constrains another’s movement | habituation | Baillargeon 1987 |
| where did I hide it? | violation-of-expectations | Wilcox et al 1996 |
| wide objects can’t disappear behind a narrow occluder | violation-of-expectations | Wang et al 2004 |
| when and where will it reappear? | anticipatory looking | Rosander et al 2004 |
| marker of object maintenance | EEG | Kaufman et al 2005 |

How?

What is

core knowledge / belief?

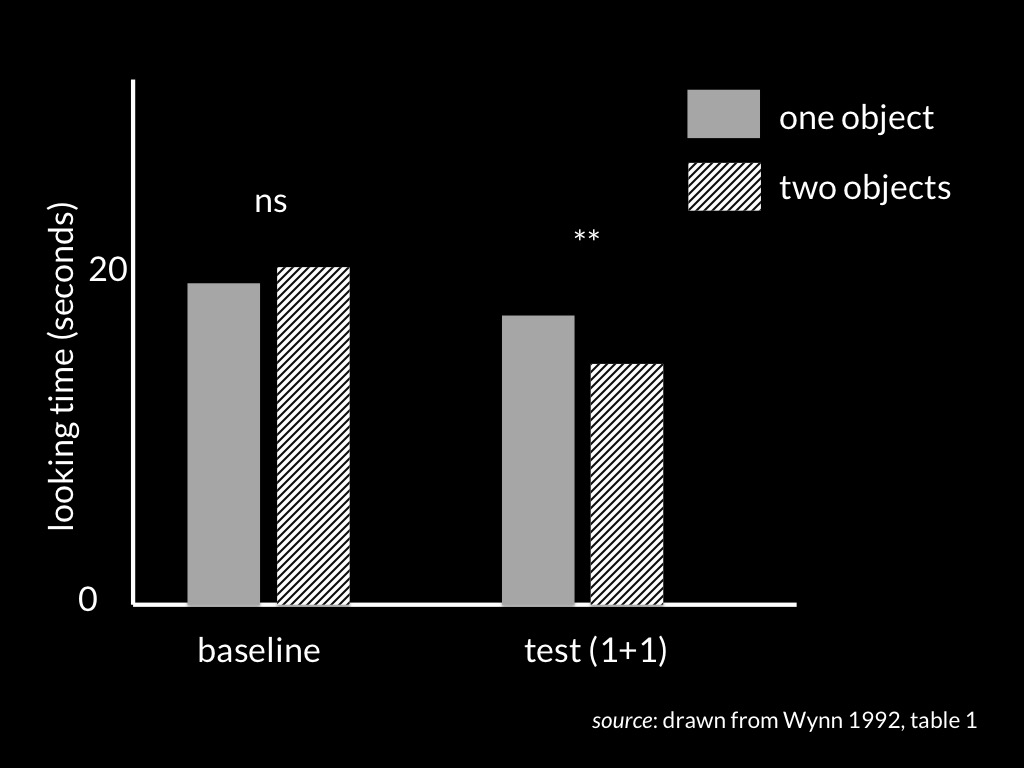

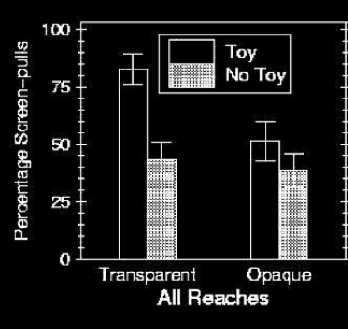

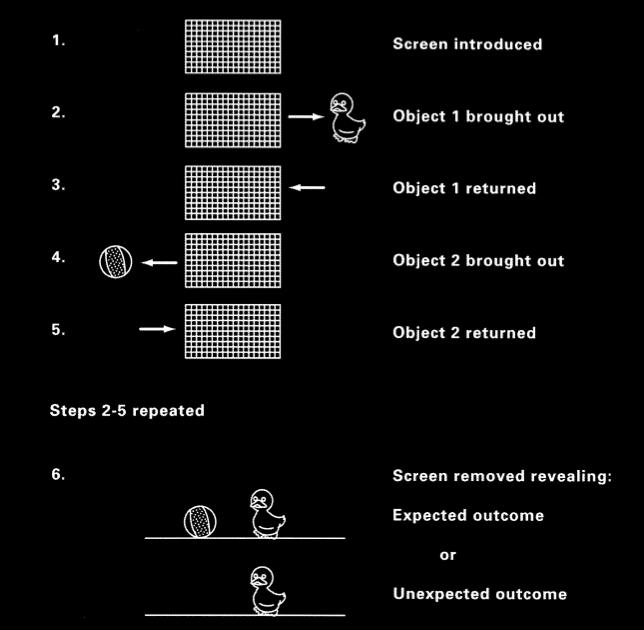

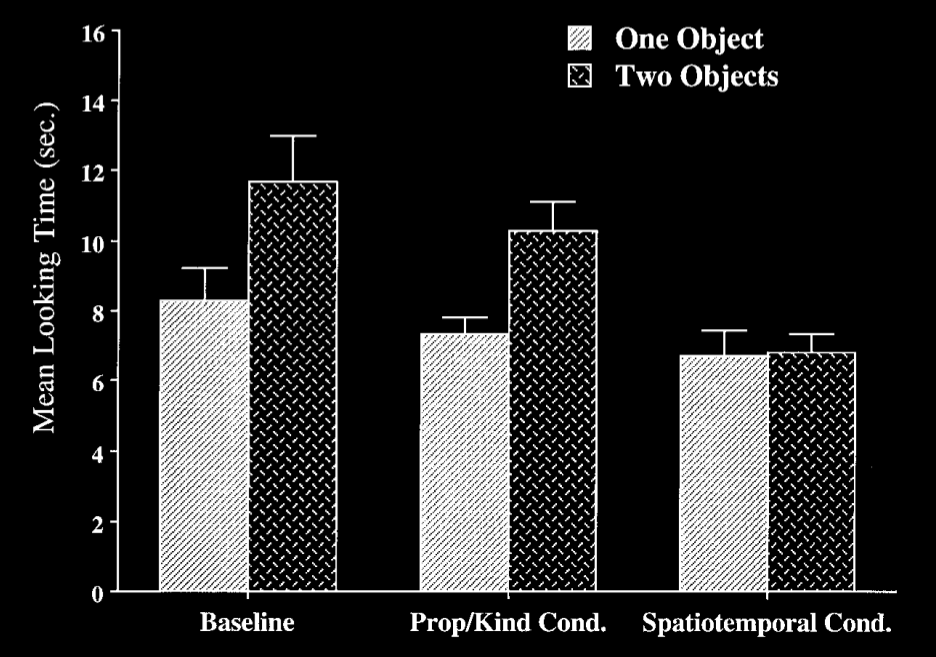

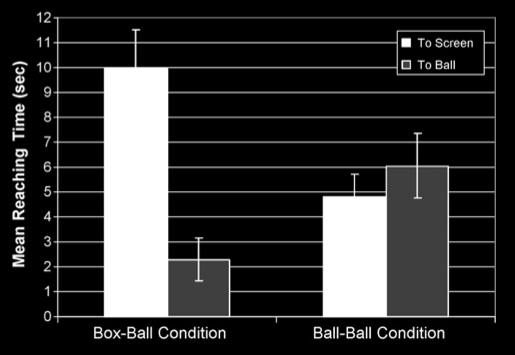

Shinskey & Munakata 2001, figure 1

Shinskey & Munakata 2001, figure 2

| | occlusion | endarkening |
| --- | --- | --- |
| violation-of-expectations | ✔ | ✘ |
| manual search | ✘ | ✔ |

Charles & Rivera (2009)

‘there is a third type of conceptual structure,

dubbed “core knowledge” ...

that differs systematically from both

sensory/perceptual representation[s] ... and ... knowledge.’

Carey, 2009 p. 10

‘Just as humans are endowed with multiple, specialized perceptual systems, so we are endowed with multiple systems for representing and reasoning about entities of different kinds.’

(Carey and Spelke 1996: 517)

‘core systems are

- largely innate

- encapsulated

- unchanging

- arising from phylogenetically old systems

- built upon the output of innate perceptual analyzers’

(Carey and Spelke 1996: 520)

4- to 6-month-olds can track briefly occluded objects

| scenario | method | source |
| --- | --- | --- |
| 1 vs 2 objects | habituation | Spelke et al 1995 |
| one unperceived object constrains another’s movement | habituation | Baillargeon 1987 |
| where did I hide it? | violation-of-expectations | Wilcox et al 1996 |
| wide objects can’t disappear behind a narrow occluder | violation-of-expectations | Wang et al 2004 |
| when and where will it reappear? | anticipatory looking | Rosander et al 2004 |
| marker of object maintenance | EEG | Kaufman et al 2005 |

How?

Crude Picture of the Mind

- epistemic (knowledge states)

- broadly motoric (motor representations of outcomes and affordances)

- broadly perceptual (visual, tactual, ... representations; object indexes ...)

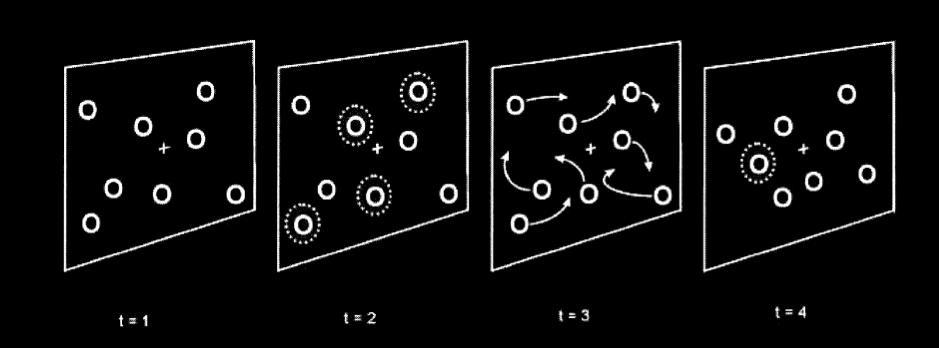

object indexes

Pylyshyn 2001, figure 6

object indexes can survive brief occlusion

modified from Scholl 2007, figure 4

object index assignments can conflict with knowledge states ...

Scholl 2007, figure 4

Functions of object indexes:

✔ influence how attention is allocated

✔ guide ongoing actions (e.g. visual tracking, reaching)

✘ initiate purposive actions

The CLSTX conjecture:

Five-month-olds’ abilities to track briefly unperceived objects

are not grounded on belief or knowledge:

instead

they are consequences of the operations of

a system of object indexes.

Leslie et al (1989); Scholl and Leslie (1999); Carey and Xu (2001)

evidence

behavioural and neural indicators

signature limits

Scholl 2007, figure 4

Carey and Xu 2001, figure 3

Xu and Carey 1996, figure 4

The CLSTX conjecture:

Five-month-olds’ abilities to track briefly unperceived objects

are not grounded on belief or knowledge:

instead

they are consequences of the operations of

a system of object indexes.

Leslie et al (1989); Scholl and Leslie (1999); Carey and Xu (2001)

| | occlusion | endarkening |
| --- | --- | --- |
| violation-of-expectations | ✔ | ✘ |
| manual search | ✘ | ✔ |

Charles & Rivera (2009)

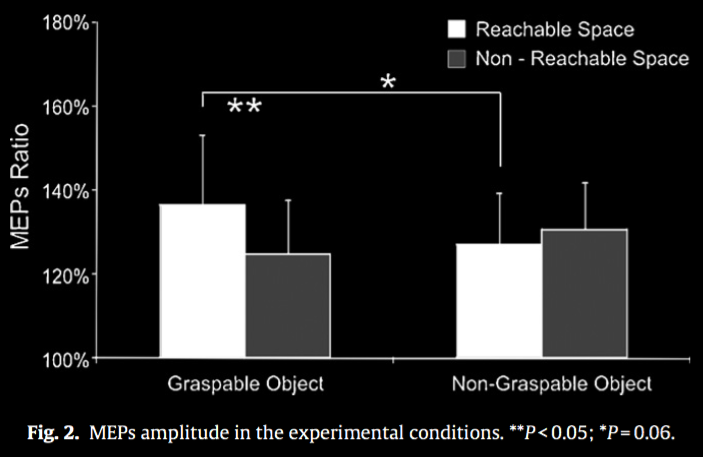

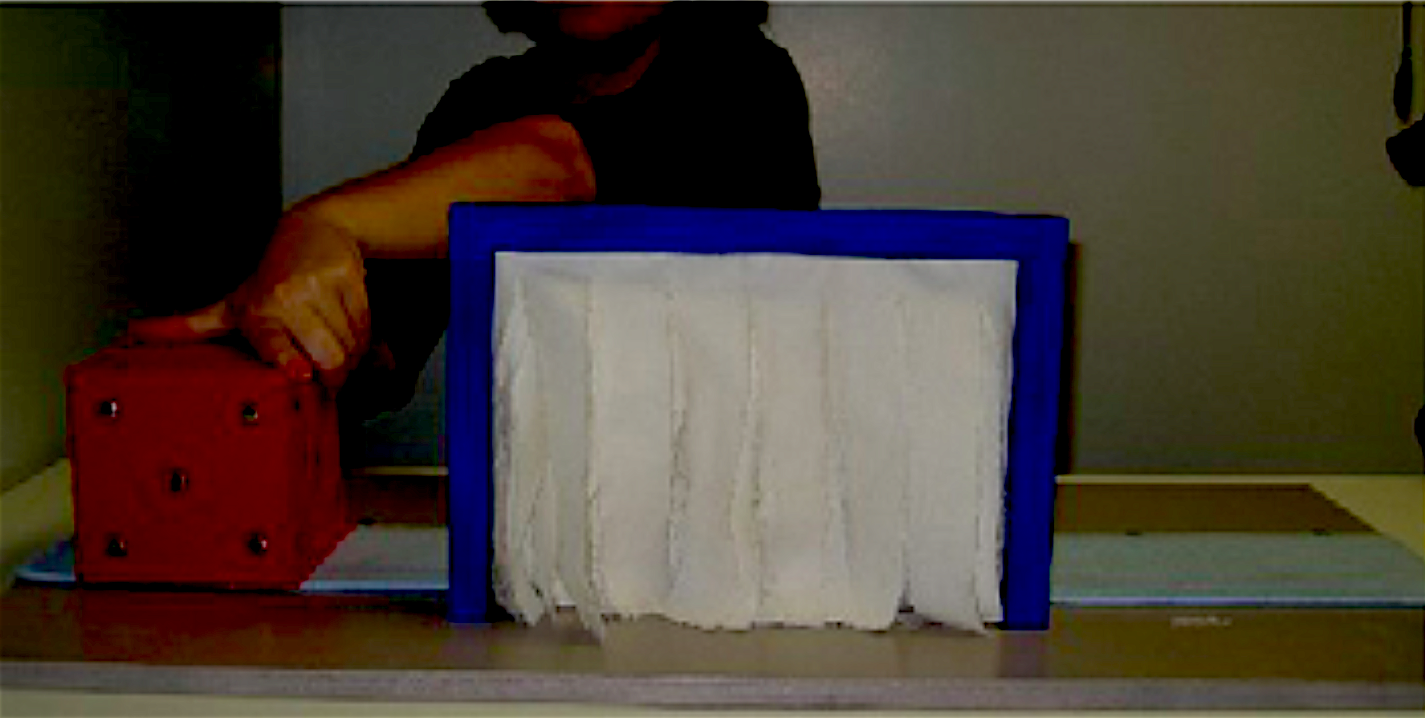

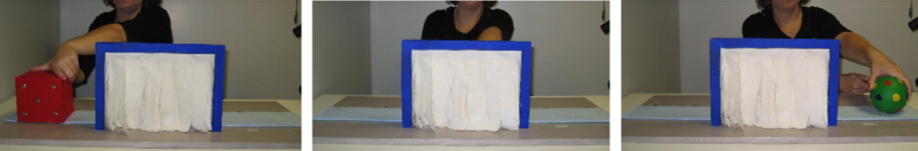

Cardellicchio, Sinigaglia & Costantini, 2011 figure 1

Cardellicchio, Sinigaglia & Costantini, 2011 figure 2

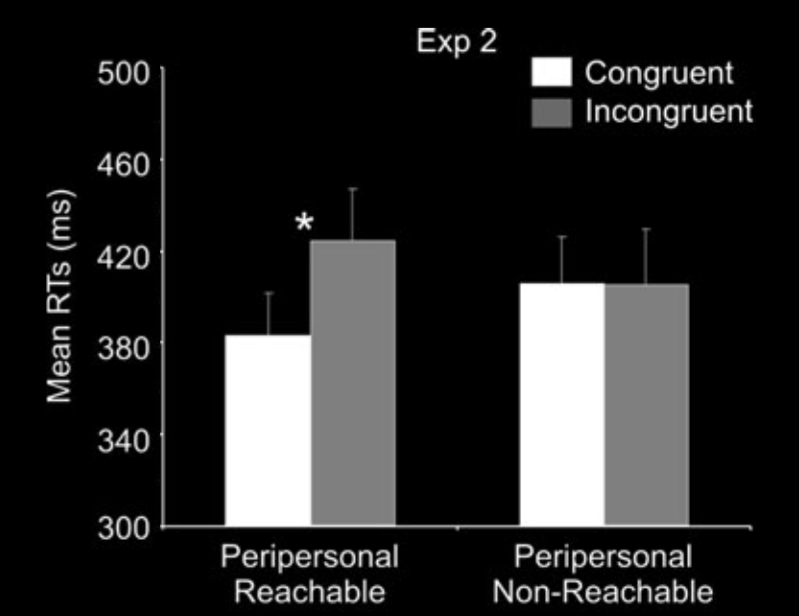

Costantini et al, 2010 figure 1B

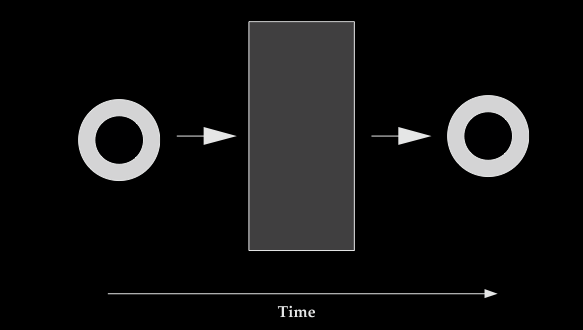

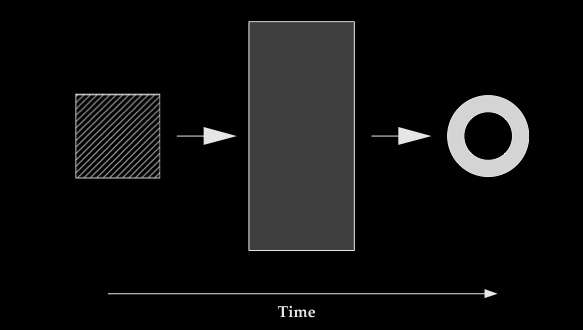

| | survive occlusion | survive endarkening |
| --- | --- | --- |
| object index | ✔ | ✘ |
| motor representation | ✘ (barrier) | ✔ |

| | occlusion | endarkening |
| --- | --- | --- |
| violation-of-expectations | ✔ | ✘ |
| manual search | ✘ (barrier) | ✔ |

The CLSTX conjecture:

Five-month-olds’ abilities to track briefly unperceived objects

are not grounded on belief or knowledge:

instead

they are consequences of the operations of

a system of object indexes.

Leslie et al (1989); Scholl and Leslie (1999); Carey and Xu (2001)

... and of a further, independent capacity to track physical objects which involves motor representations and processes.

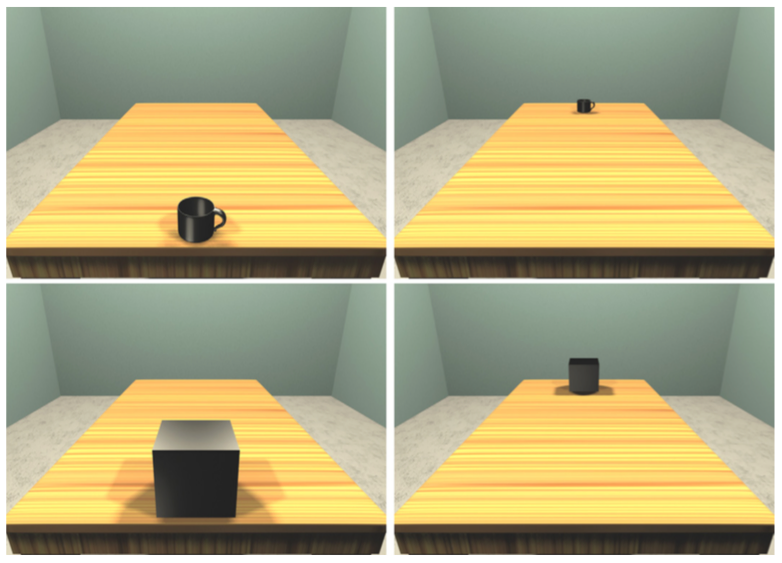

McCurry et al 2009, figure 1 (part)

McCurry et al 2009, figure 1

McCurry et al 2009, figure 2

The CLSTX conjecture:

Five-month-olds’ abilities to track briefly unperceived objects

are not grounded on belief or knowledge:

instead

they are consequences of the operations of

a system of object indexes.

Leslie et al (1989); Scholl and Leslie (1999); Carey and Xu (2001)

... and of a further, independent capacity to track physical objects which involves motor representations and processes.

A Question:

What can object indexes explain?

| | occlusion | endarkening |
| --- | --- | --- |
| violation-of-expectations | ✔ | ✘ |
| manual search | ✘ | ✔ |

Charles & Rivera (2009)

Functions of object indexes:

✔ influence how attention is allocated

✔ guide ongoing actions (e.g. visual tracking, reaching)

✘ initiate purposive actions

Wynn 1992, fig 1 (part)

object index operations

↓

? ? ? metacognitive feelings

↓

patterns in looking durations

‘metacognitive feelings ... allow a transition from the implicit-automatic mode to the explicit-controlled mode of operation.’

Koriat, 2000 p. 150

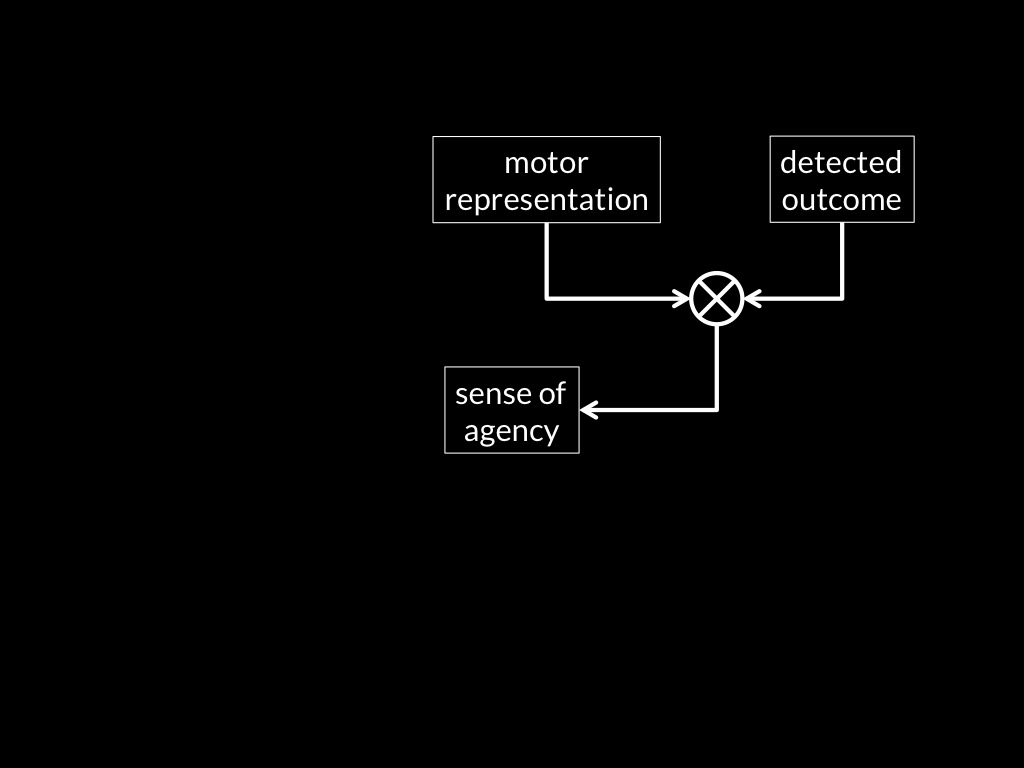

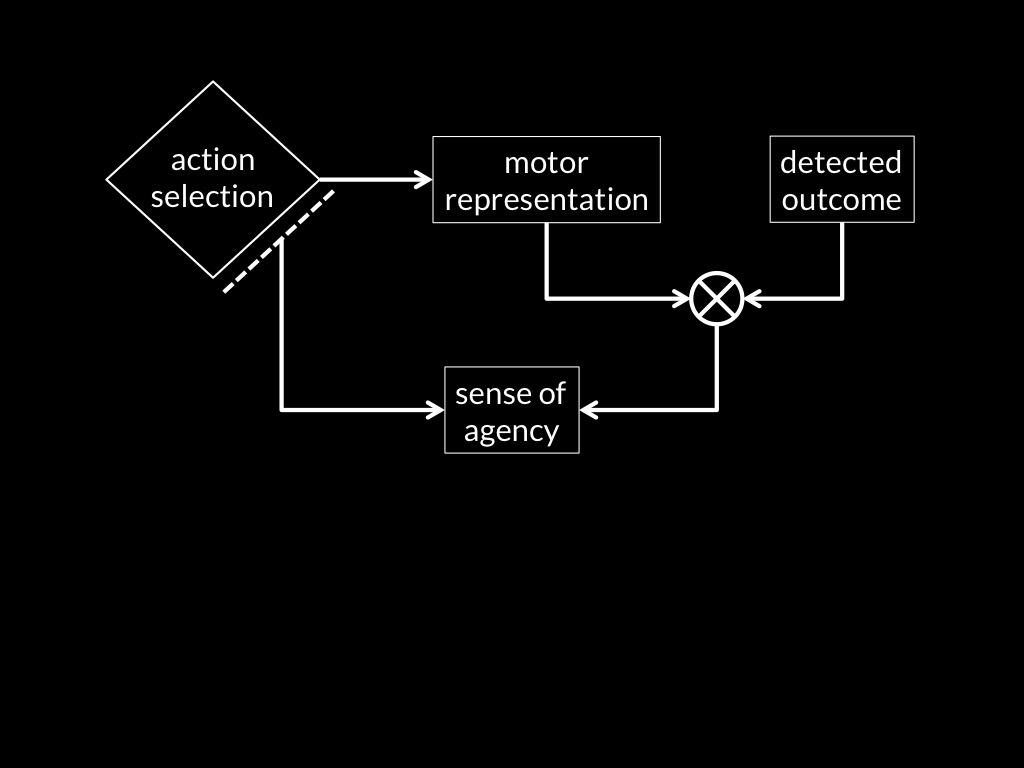

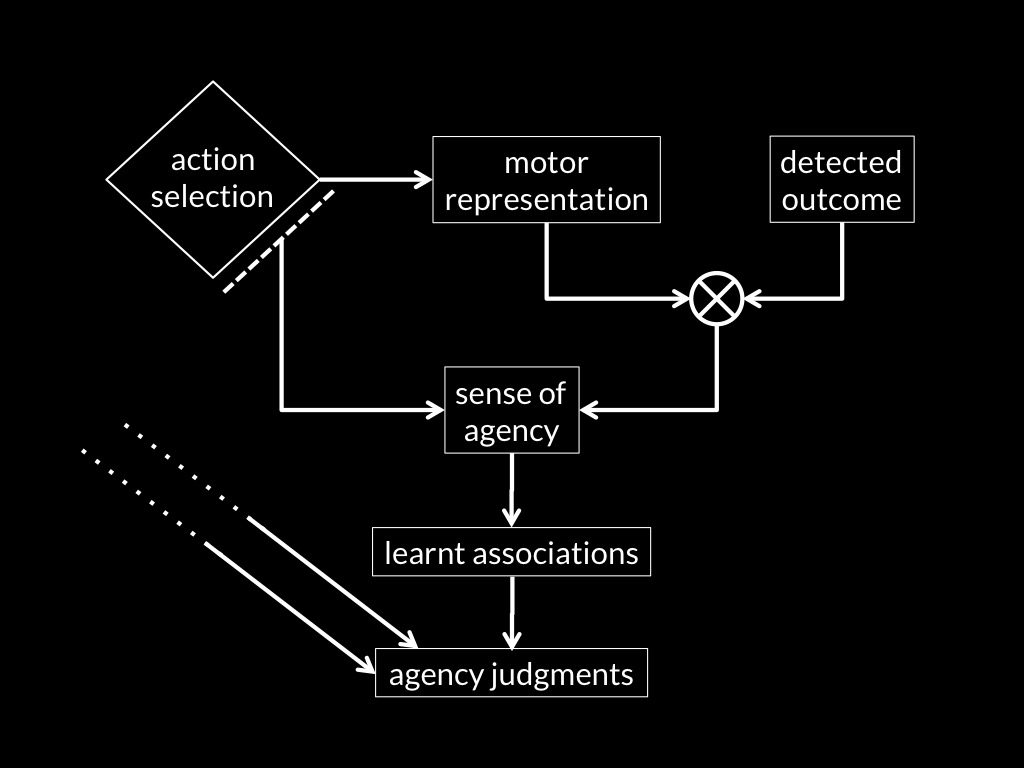

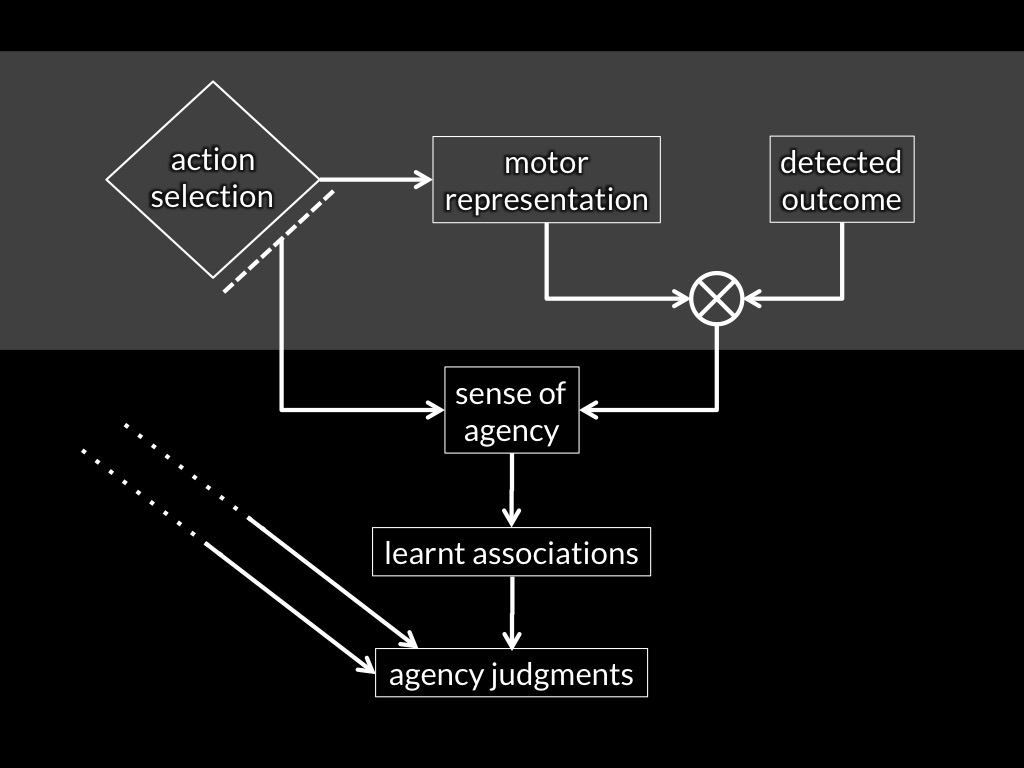

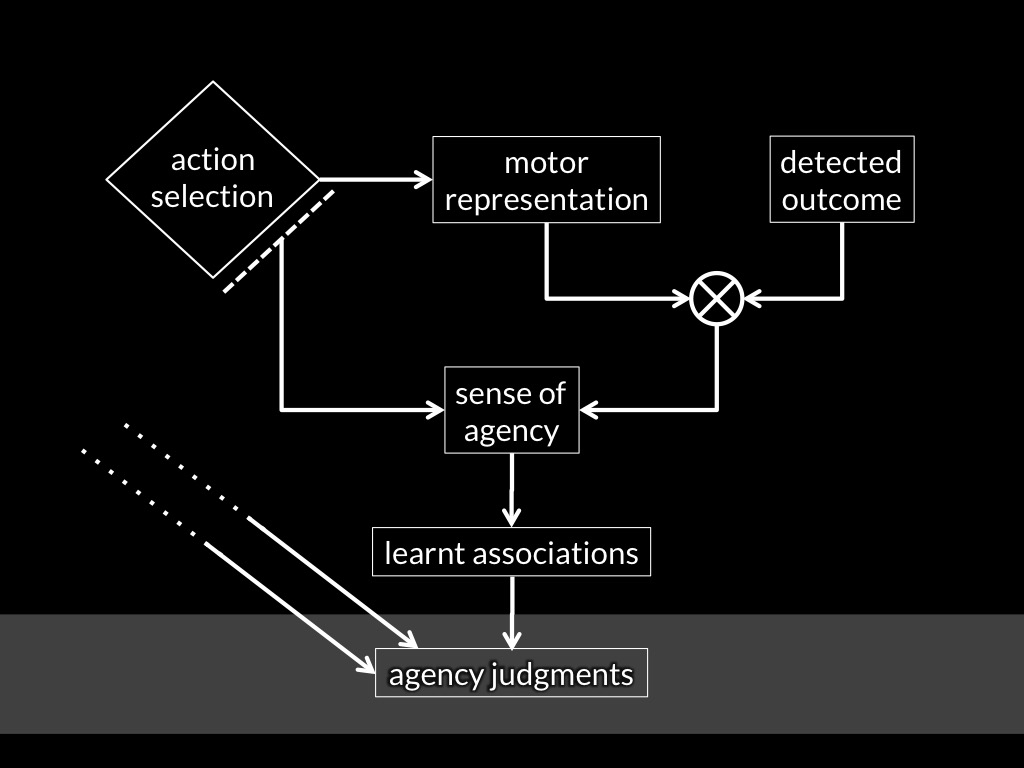

Adapted from Sidarus & Haggard, 2016 figure 5

Metacognitive feelings

There are aspects of the overall phenomenal character of experiences which their subjects take to be informative about things that are only distantly related (if at all) to the things that those experiences intentionally relate the subject to.

Metacognitive feelings

can be thought of as

sensations.

Sensations are

- monadic properties of perceptual experiences

- individuated by their normal causes

- (so they do not involve an intentional relation)

- which alter the overall phenomenal character of those experiences

- in ways not determined by the experiences’ contents.

metacognitive feelings trigger beliefs only via associations.

metacognitive feelings

There are aspects of the overall phenomenal character of experiences which their subjects take to be informative about things that are only distantly related (if at all) to the things that those experiences intentionally relate the subject to.

Wynn 1992, fig 1 (part)

feeling of surprise

‘the intensity of felt surprise is [...] influenced by [...]

the degree of the event’s interference with ongoing mental activity’

Reisenzein et al, 2000 p. 271; cf. Touroutoglou & Efklides, 2010

object index operations

↓

? ? ? metacognitive feelings

↓

patterns in looking durations

Objection

If object index operations produce metacognitive feelings,

wouldn’t these generate knowledge about object locations?

(And so generate the incorrect predictions that flow from ascribing knowledge of object locations?)

object index operations

↓

? ? ? metacognitive feelings

↓

patterns in looking durations

conclusion

Q1 What is the nature of infants’ earliest cognition of physical objects?

‘there is a third type of conceptual structure,

dubbed “core knowledge” ...

that differs systematically from both

sensory/perceptual representation[s] ... and ... knowledge.’

Carey, 2009 p. 10

Crude Picture of the Mind

- epistemic (knowledge states)

- broadly motoric (motor representations of outcomes and affordances)

- broadly perceptual (visual, tactual, ... representations; object indexes ...)

- metacognitive feelings (connect the motoric and perceptual to knowledge)

Q2 How do you get from these early forms of cognition to knowledge of simple facts about particular physical objects?

The Assumption of Representational Connections

The transition involves operations on the contents of representations, which transform them into (components of) the contents of knowledge states.

Conjecture

Metacognitive feelings (and behaviours, and other intentional isolators)

connect early-developing processes for tracking objects, causes, actions and minds

to the epistemic.

synchronic

diachronic

object index operations

↓

metacognitive feelings

↓

patterns in looking durations

object indexes + metacognitive feelings

↓

? ? ?

↓

knowledge of objects

Development is (re)discovery.